What began as a 7-step automated video pipeline has grown into a 24-step AI production studio. Showspring now essentially replaces a team of 10–14 specialists (writers, animators, editors, and social managers), cutting production time from weeks to a single, highly automated pass.

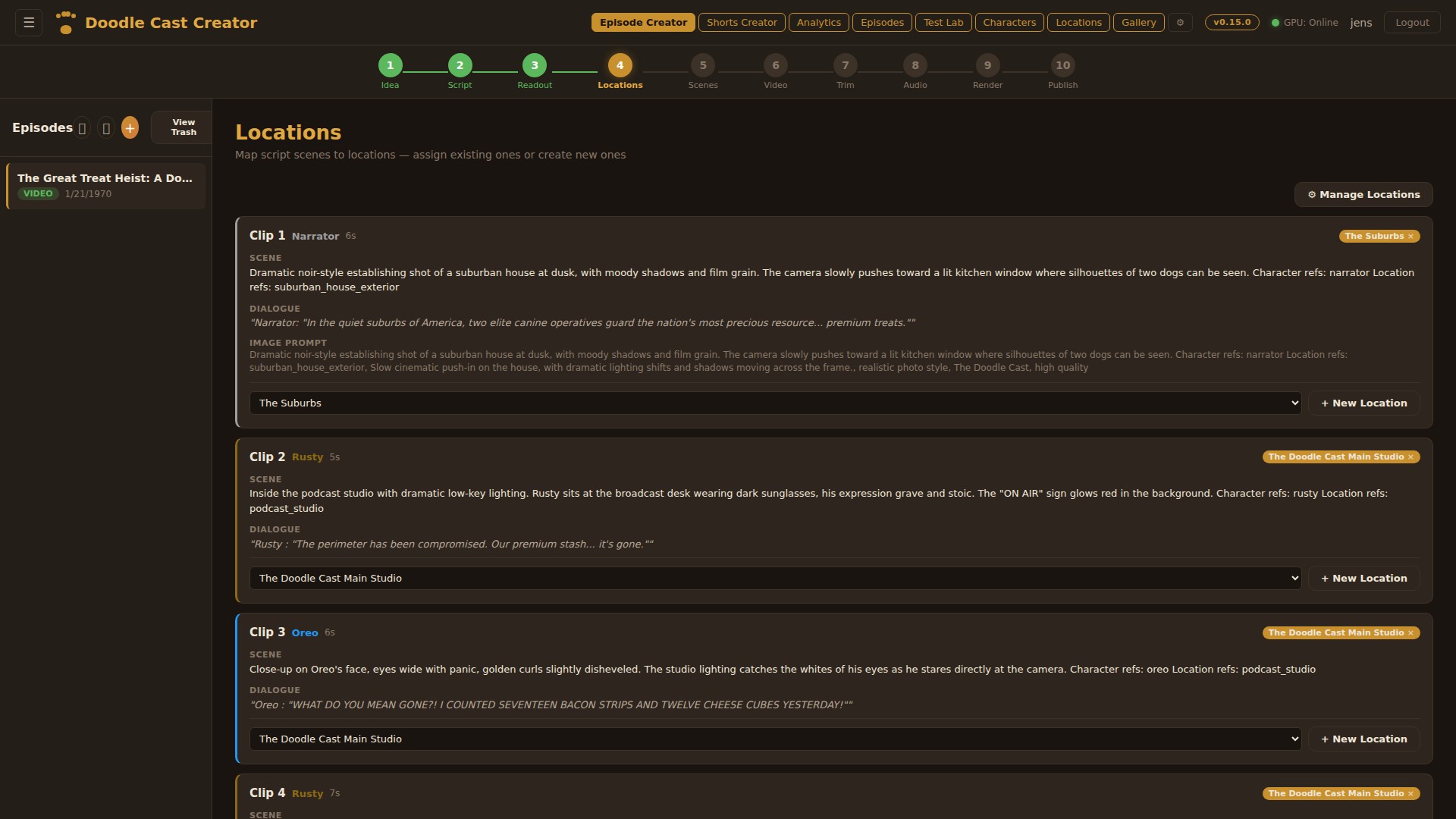

The New 10-Step Core Episode Pipeline

The episode creation process is now more granular, giving finer control over the final output:

- Creative Director: Three AI personas (Llama, Gemma, Qwen) brainstorm concepts, while Grok 4 acts as a judge and picks the strongest idea.

- Script Writer: Generates a full script with structured clips, dialogue, and scene descriptions, heavily referencing our internal "Show Bible".

- Voice Readout: Uses ElevenLabs to synthesize distinct character voices, allowing us to verify pacing and flow instantly.

- Location Mapping: Automatically extracts locations from the script and maps them to a reusable visual library with reference images.

- Scene Generation: Generates photorealistic images for each clip using backends such as Z-Turbo, Gemini, or ComfyUI.

- Video Generation: Turns those static images into high-quality motion using Google Veo (cloud) or local models such as WAN 2.2 and LTX 2.3 running on our RTX 5090.

- Trim Editor: Fine-tunes clips with frame-accurate in/out markers, just like a traditional NLE.

- Audio Mix: A comprehensive 4-track editor handling background audio, voice, SFX, and music with keyframe automation.

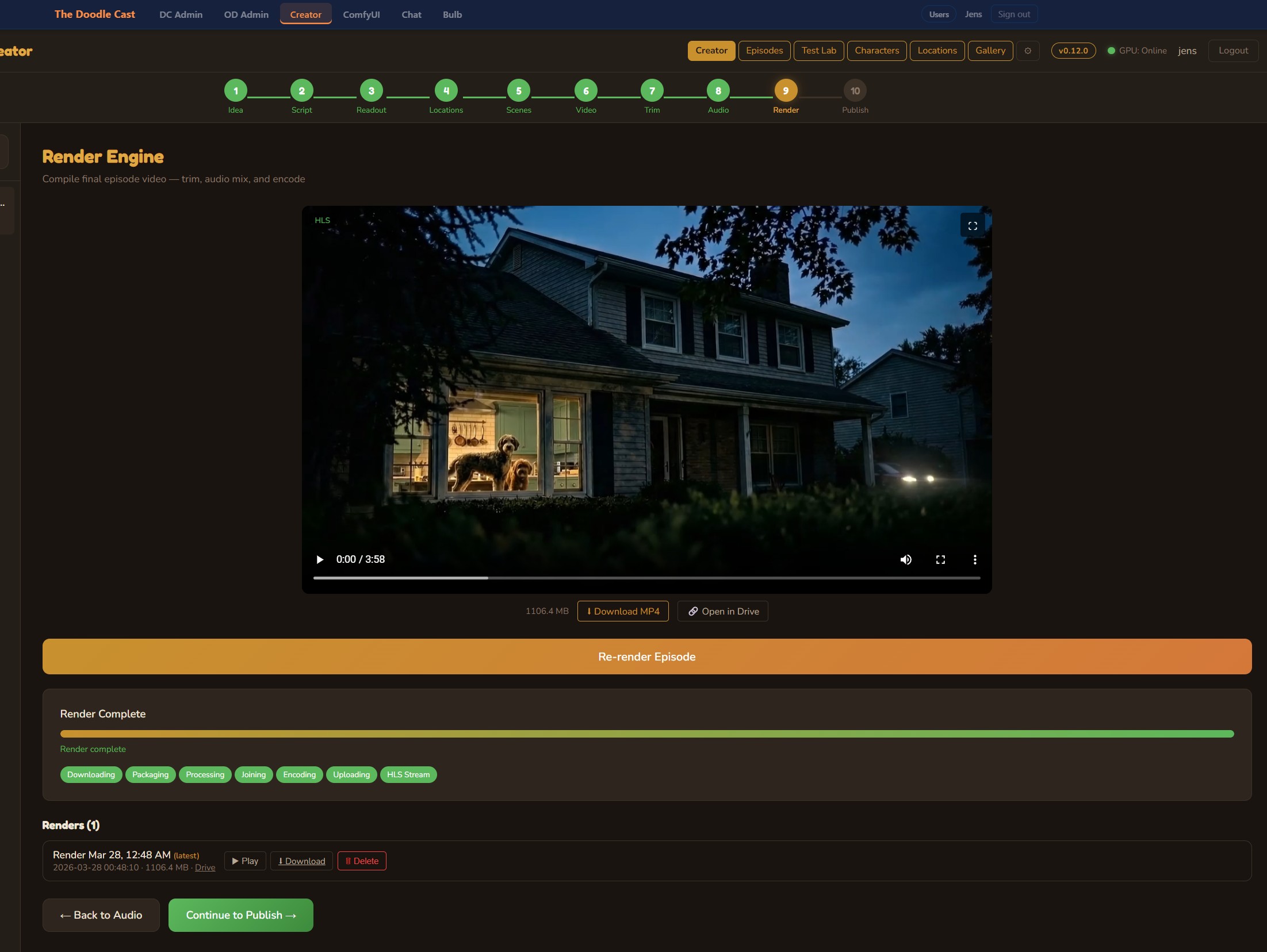

- Render Engine: Uses dynamically generated ffmpeg filter graphs to composite, concatenate, and encode the final MP4 using NVENC/H.264.

- Publish to YouTube: Auto-generates AI thumbnails and metadata, publishing directly via the YouTube API.
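
To make the Trim Editor and Render Engine steps concrete, here is a minimal sketch of how a filter graph could be generated from per-clip in/out markers. It is an illustration under stated assumptions, not Showspring's actual render code: the function names `build_concat_graph` and `build_command`, the file names, and the AAC audio codec are hypothetical, and it assumes every clip has one video and one audio stream. The ffmpeg filters (`trim`, `atrim`, `setpts`, `asetpts`, `concat`) and the `h264_nvenc` encoder are standard ffmpeg components.

```python
from typing import List, Tuple


def build_concat_graph(trims: List[Tuple[float, float]]) -> str:
    """Build an ffmpeg filter_complex string that trims each input to its
    in/out markers (seconds) and concatenates the results in order."""
    chains = []
    labels = []
    for i, (start, end) in enumerate(trims):
        # Trim video and audio separately, then reset timestamps to zero
        # so the concat filter receives clean, gap-free segments.
        chains.append(
            f"[{i}:v]trim=start={start}:end={end},setpts=PTS-STARTPTS[v{i}]"
        )
        chains.append(
            f"[{i}:a]atrim=start={start}:end={end},asetpts=PTS-STARTPTS[a{i}]"
        )
        labels.append(f"[v{i}][a{i}]")
    chains.append(
        f"{''.join(labels)}concat=n={len(trims)}:v=1:a=1[outv][outa]"
    )
    return ";".join(chains)


def build_command(
    inputs: List[str], trims: List[Tuple[float, float]], output: str
) -> List[str]:
    """Assemble the full ffmpeg argument list (hypothetical helper)."""
    if len(inputs) != len(trims):
        raise ValueError("each input clip needs exactly one (start, end) pair")
    cmd = ["ffmpeg", "-y"]
    for path in inputs:
        cmd += ["-i", path]
    cmd += [
        "-filter_complex", build_concat_graph(trims),
        "-map", "[outv]",
        "-map", "[outa]",
        "-c:v", "h264_nvenc",  # NVENC H.264, as named in the post
        "-c:a", "aac",         # assumption: the post does not name an audio codec
        output,
    ]
    return cmd
```

Passing the resulting list to `subprocess.run` would execute the render. Building the graph as a string from clip data is what makes it "dynamic": adding or re-trimming a clip changes only the input list, not the rendering code.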

Advanced Specialized Pipelines

Beyond standard episodes, Showspring now boasts specialized workflows tailored to specific content formats:

- YouTube Shorts & Visual Calendar (v1.5): A batch pipeline for vertical shorts featuring a global drag-and-drop visual content calendar, live status refresh, and multi-platform publishing (TikTok, IG Reels, X, FB) with HEVC-to-H.264 transcoding.

- Podcast Creator & Voice Studio: A multi-character conversational pipeline featuring a dedicated Voice Studio with interactive waveforms, VU meters, and percentage-based SVG volume envelopes.

- OTIO & Resolve Agent (v2.0): Advanced NLE integration featuring a Windows system-tray agent, live polling, server-side ProRes 4444 still encoding, and automated 4K Over-The-Shoulder (OTS) graphic merges.

- Audience Reactions (v1.4): Modeled on the feel of SNL's "Weekend Update", this layer uses a multi-take stem library and natural tail bleeds so that audience audio sounds live and responds to what is happening on screen.
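
As an illustration of the percentage-based volume envelopes mentioned for the Voice Studio, the sketch below treats an envelope as a list of `(time_seconds, percent)` keyframes. The linear interpolation between keyframes, the function names `gain_at` and `envelope_to_svg_points`, and the coordinate mapping are all assumptions made for this example; the post does not describe the actual interpolation or drawing logic.

```python
from typing import List, Tuple

Keyframe = Tuple[float, float]  # (time in seconds, volume in percent)


def gain_at(envelope: List[Keyframe], t: float) -> float:
    """Return the volume percentage at time t, interpolating linearly
    between keyframes and holding the first/last value outside them."""
    points = sorted(envelope)
    if not points:
        return 100.0  # assumption: no keyframes means unchanged volume
    if t <= points[0][0]:
        return points[0][1]
    if t >= points[-1][0]:
        return points[-1][1]
    for (t0, p0), (t1, p1) in zip(points, points[1:]):
        if t0 <= t <= t1:
            if t1 == t0:
                return p1
            return p0 + (p1 - p0) * (t - t0) / (t1 - t0)
    return points[-1][1]


def envelope_to_svg_points(
    envelope: List[Keyframe], width: float, height: float, duration: float
) -> str:
    """Map keyframes to an SVG polyline 'points' string.
    100% sits at the top edge (y = 0) and 0% at the bottom (y = height)."""
    coords = []
    for t, pct in sorted(envelope):
        x = t / duration * width
        y = height * (1 - pct / 100)
        coords.append(f"{x:.1f},{y:.1f}")
    return " ".join(coords)
```

Storing levels as percentages rather than pixel positions keeps the envelope independent of the size at which the waveform is drawn, so the same keyframes can drive both the on-screen SVG and the gain applied at render time.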

The Hybrid AI & Infrastructure Model

Showspring still runs on a hybrid infrastructure. Tasks that demand the strongest available models or cloud-native capabilities (Google Veo, Gemini 3 Pro, Claude Opus 4.7, ElevenLabs) go to external APIs.

Conversely, we send heavy generative workloads (WAN 2.2, LTX 2.3, Z-Turbo) over an SSH tunnel to our local RTX 5090 rig. Balancing compute this way lets us reach Hollywood-level AI production without astronomical cloud GPU bills.
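
The routing split described above can be sketched as a simple lookup table plus an SSH port forward. This is a minimal illustration, not the real dispatcher: the `BACKENDS` table only restates the models named in this post, and `route`, `tunnel_command`, the host name, and the port numbers in the usage note are hypothetical. The `ssh` flags used (`-N` for no remote command, `-L` for local port forwarding) are standard OpenSSH options.

```python
from typing import Dict, List

# Which side of the hybrid setup each model runs on, per this post.
BACKENDS: Dict[str, str] = {
    "Google Veo": "cloud",
    "Gemini 3 Pro": "cloud",
    "Claude Opus 4.7": "cloud",
    "ElevenLabs": "cloud",
    "WAN 2.2": "local",
    "LTX 2.3": "local",
    "Z-Turbo": "local",
}


def route(model: str) -> str:
    """Return 'cloud' or 'local' for a known model, else raise ValueError."""
    try:
        return BACKENDS[model]
    except KeyError:
        raise ValueError(f"no backend configured for {model!r}") from None


def tunnel_command(host: str, local_port: int, remote_port: int) -> List[str]:
    """Build an ssh command that forwards local_port to remote_port on the
    GPU host, so local-model requests can be sent to localhost."""
    return ["ssh", "-N", "-L", f"{local_port}:localhost:{remote_port}", host]
```

For example, `tunnel_command("gpu-rig", 8188, 8188)` yields an argument list that could be started with `subprocess.Popen`, after which any job where `route(model) == "local"` would target `localhost:8188` instead of a cloud endpoint.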

AI Coordination & Security

As Showspring's complexity grows, we increasingly rely on multiple AI engineering agents working on the codebase simultaneously. To keep them from colliding, we implemented Cooperative File Locks and automated pre-push lint hooks. While one agent is refactoring the frontend, another agent working on the Python render server cannot push changes that leave git in a conflicting state. We've also implemented strict server-side state locks to prevent accidental overwrites of live content.
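
One common way to build cooperative file locks is a sidecar lock file created atomically with `O_CREAT | O_EXCL`. The sketch below shows that general technique only; the post does not say how Showspring's locks are implemented, and the `.lock` suffix, the JSON payload, and the function names `acquire` and `release` are assumptions for illustration.

```python
import json
import os
import time
from pathlib import Path


def lock_path(target: str) -> Path:
    """Sidecar lock file that sits next to the file being edited."""
    return Path(str(target) + ".lock")


def acquire(target: str, owner: str) -> bool:
    """Try to claim target for owner. Returns False if someone holds it.
    O_CREAT | O_EXCL makes creation atomic: only one caller can succeed."""
    try:
        fd = os.open(lock_path(target), os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as fh:
        json.dump({"owner": owner, "acquired_at": time.time()}, fh)
    return True


def release(target: str, owner: str) -> bool:
    """Release the lock only if owner is the current holder."""
    path = lock_path(target)
    try:
        holder = json.loads(path.read_text())["owner"]
    except (FileNotFoundError, json.JSONDecodeError):
        return False
    if holder != owner:
        return False
    path.unlink()
    return True
```

The lock is cooperative because nothing stops a process from writing to the file anyway; it only works if every agent calls `acquire` before editing and backs off when it returns `False`. A production version would likely also need stale-lock expiry for agents that crash while holding a lock.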

What's Next?

Showspring is testing the limits of what a system built and run by a single expert engineer can achieve. By treating content creation as a software engineering and orchestration problem, we are defining the future of AI-native media production.
