Showspring

How Showspring got built. The product lives at showspring.com — this page is the engineering side of the story, using The Doodle Cast as the worked example. Every pipeline step, every model, every decision we made along the way.

This full episode of The Doodle Cast was produced end-to-end by Showspring — from idea to YouTube publish.

AI scoreboard: three models, six categories

Three leading AI models were given full architectural context (codebase stats, feature set, pipeline details, and market positioning) and asked to score Showspring across six categories from 1 to 10, with a short comment per score.

Three models re-ran their independent evaluation against the current build state — v1.5 (channel health: anti-slop hardening, burned-in captions, retention feedback loop, top-level calendar, lock-for-editing) and v2.0 (DaVinci Resolve OTIO integration: five-level integration ladder, single manifest contract, pystray daemon, round-trip via markers). Scores below are unedited responses to identical prompts.

April 28, 2026 (v1.5 + v2.0 refresh)

Showspring’s vision of a fully automated, end-to-end AI pipeline from idea to published video is ambitious and forward-thinking, positioning it as a complete production studio for episodic content.

The fragmented AI video tool market presents a strong opportunity for a comprehensive pipeline like Showspring, especially with growing demand for quick content creation, though YouTube’s penalties on AI content pose a notable challenge.

Showspring stands out with its unique full-stack pipeline, anti-slop hardening, and retention feedback loop, offering a defensive advantage against competitors’ fragmented or single-purpose tools.

Features like the top-level calendar, lock-for-editing, and opt-in schedulers suggest a polished workflow, but the heavy reliance on technical integrations may overwhelm non-expert users without more intuitive onboarding.

The hybrid cloud-local setup with robust services, hermetic testing, and health-driven fallbacks demonstrates a scalable and resilient architecture tailored for AI video production.

With 113 integration tests, non-destructive caching, and careful handling of edge cases like API limits and antivirus flags, the implementation shows high rigor and attention to reliability.

Heavy dependency on third-party AI services like Veo and Gemini could lead to disruptions if APIs change or costs rise unexpectedly. YouTube’s penalties for AI content might undermine the retention feedback loop and limit distribution effectiveness despite defensive measures. Scalability for high-volume users could strain the VPS server and local GPU setups, potentially causing performance bottlenecks without further optimization.

April 28, 2026 (v1.5 + v2.0 refresh)

The vision for a complete idea-to-analytics production flywheel is exceptionally clear, ambitious, and comprehensive.

The market for automated content is massive, but success is heavily constrained by platform risk from AI detection and the high bar for audience acceptance.

Its fully-integrated, end-to-end pipeline with professional workflow support creates a powerful moat against the fragmented landscape of single-purpose AI tools.

The suite of described workflow features, from a master calendar to deep editor integration, suggests a powerful and polished UX designed for serious creators.

A pragmatic and resilient hybrid cloud/local architecture demonstrates mature engineering, balancing cutting-edge models with cost-effective local processing.

The extreme level of detail, from specific API workarounds to a hermetic testing pipeline, indicates an exceptionally rigorous and professional implementation.

The primary risks are platform dependency, where channels like YouTube may penalize AI content, and creative quality, as the ability to consistently generate audience-retaining content remains unproven at scale. Furthermore, reliance on third-party models creates vulnerability to their API costs, performance, and strategic shifts.

April 28, 2026 (v1.5 + v2.0 refresh)

End-to-end idea-to-published is shipping daily on a real channel; the open question is whether episodic AI content scales as a category beyond the worked example.

The integrated-pipeline gap is real, but YouTube’s aggressive AI penalty and the volatility of consumer creator tools make this a sizeable-but-risk-adjusted opportunity.

Integration depth (24-step pipeline + show-bible continuity + retention feedback loop + Resolve hatch) is genuinely defensible; well-funded competitors could replicate the surface in 6–12 months but not the operational scar tissue.

Calendar drill-down, lock-for-editing, and burned-in captions land cleanly for a power user; recent silent-failure regressions in render and metadata paths show the surface still needs rough-edge cleanup before a stranger can drive it.

Composition root + 22 routers + hermetic test pipeline + non-destructive cache + health-driven hybrid routing is unusually mature for this product stage.

113-case integration suite, archive-before-evict cache, layered rate limiting, Resolve API survival kit — all production-scarred; refactor regressions on missing imports landing in hot paths suggest the test surface needs depth on composition seams.

Third-party AI dependency is the headline operational risk — Veo, Gemini, ElevenLabs pricing or access shifts directly impact daily production. YouTube’s AI-detection arms race could nullify channel reach even with anti-slop hardening. The implementation surface keeps surfacing import and composition regressions in production hot paths, which is a sign the test suite needs deeper coverage at module seams.

AI video tools generate clips. We produce episodes.

Tools like Veo, Kling, Higgsfield, and Firefly are remarkable at generating individual video clips. But producing a complete YouTube episode — with narrative structure, multi-character dialogue, consistent visuals, sound design, and music — still takes dozens of hours of manual stitching, editing, and rendering. Showspring eliminates that gap entirely.

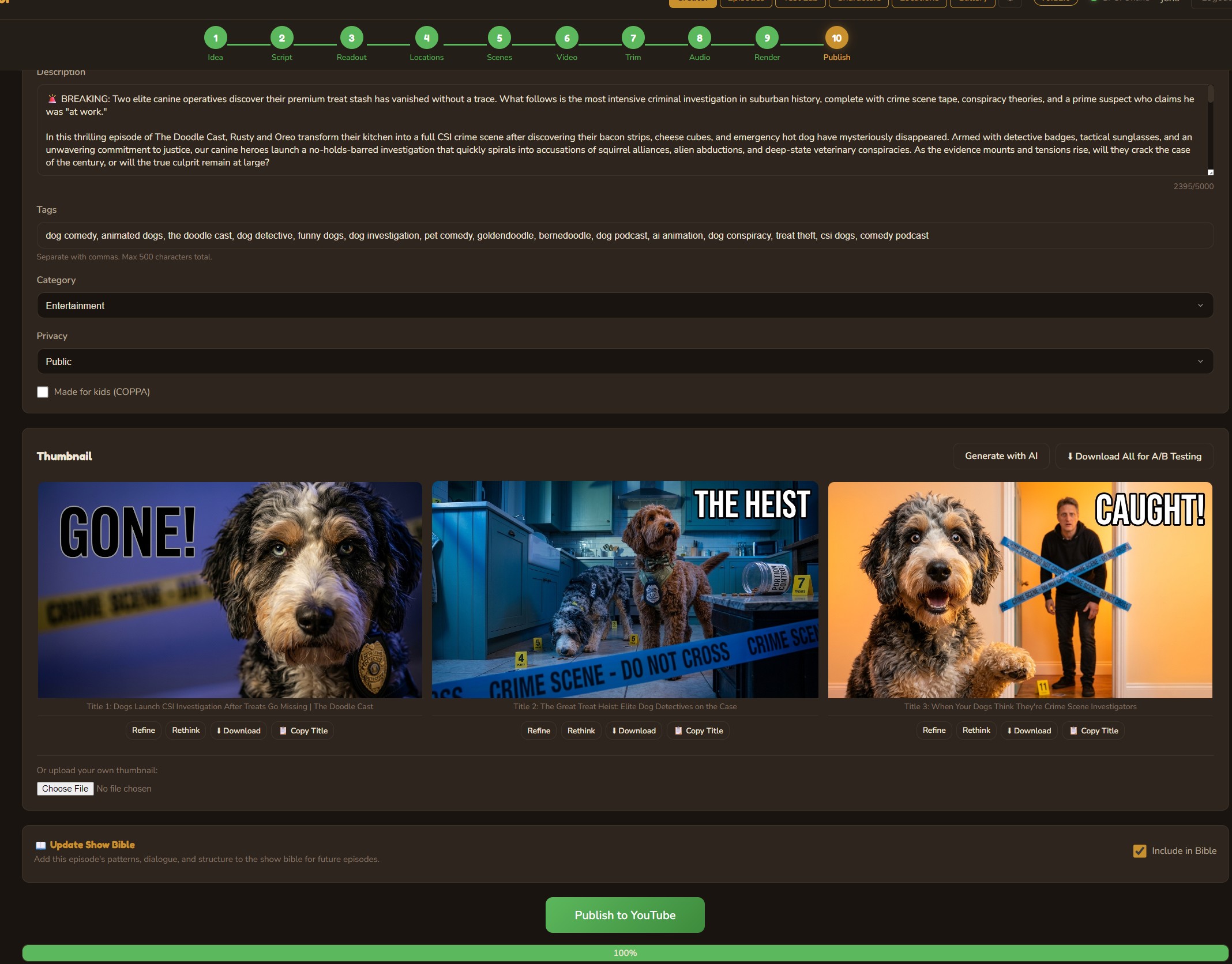

From idea to YouTube in 10 steps

Every step of the episode production pipeline — from brainstorming to final publish — is handled by a unified interface with AI assistance at every stage. A parallel Shorts pipeline adds 7 more steps for vertical content.

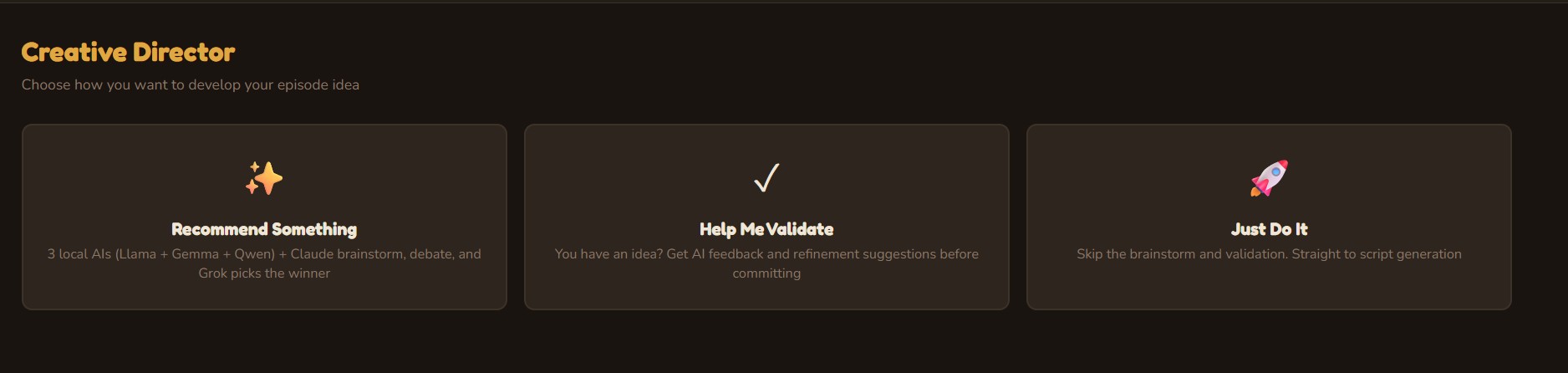

Creative Director

Three AI personas independently brainstorm episode concepts, then debate their merits. A judge AI (Grok 4) evaluates each pitch with live web search for topical relevance and selects a winner — or you bring your own idea and let the panel validate it. v1.2 adds a Research & Debate mode where Grok 4 conducts deep web research to build fact-heavy, current scripts.

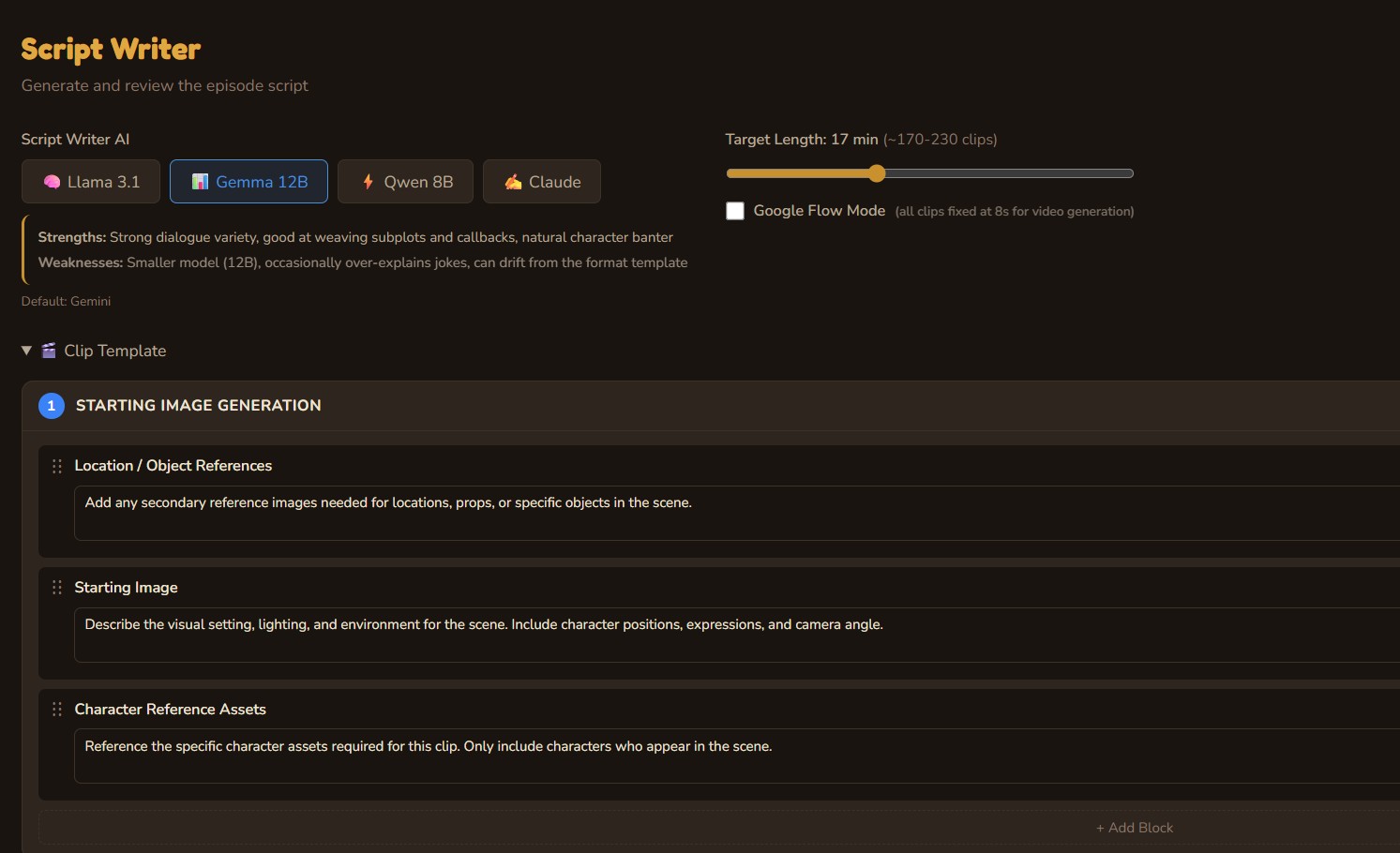

Script Writer

Choose your LLM (Llama, Gemma, Qwen, Gemini, or Claude) and generate a full episode script with structured clips, character dialogue, scene descriptions, and image prompts. The writer is grounded in the show bible: a living knowledge base that evolves with every episode, keeping characters consistent and avoiding repeated plotlines.

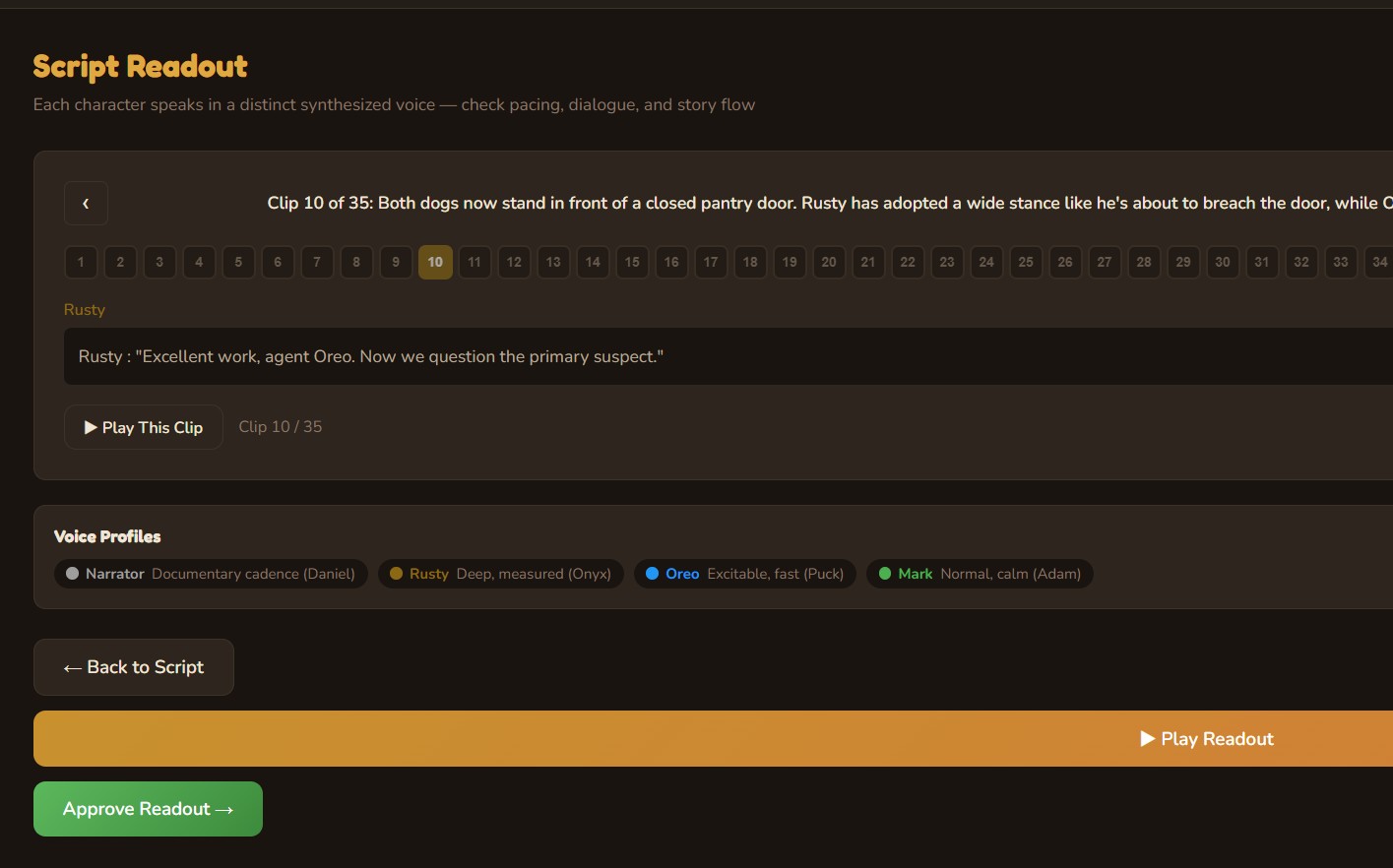

Voice Readout

Every character speaks in a distinct synthesized voice. The narrator delivers a documentary cadence; Rusty speaks with deep, measured authority; Oreo is excitable and fast. Play through the full episode readout to check pacing, dialogue flow, and story structure before committing to visual production.

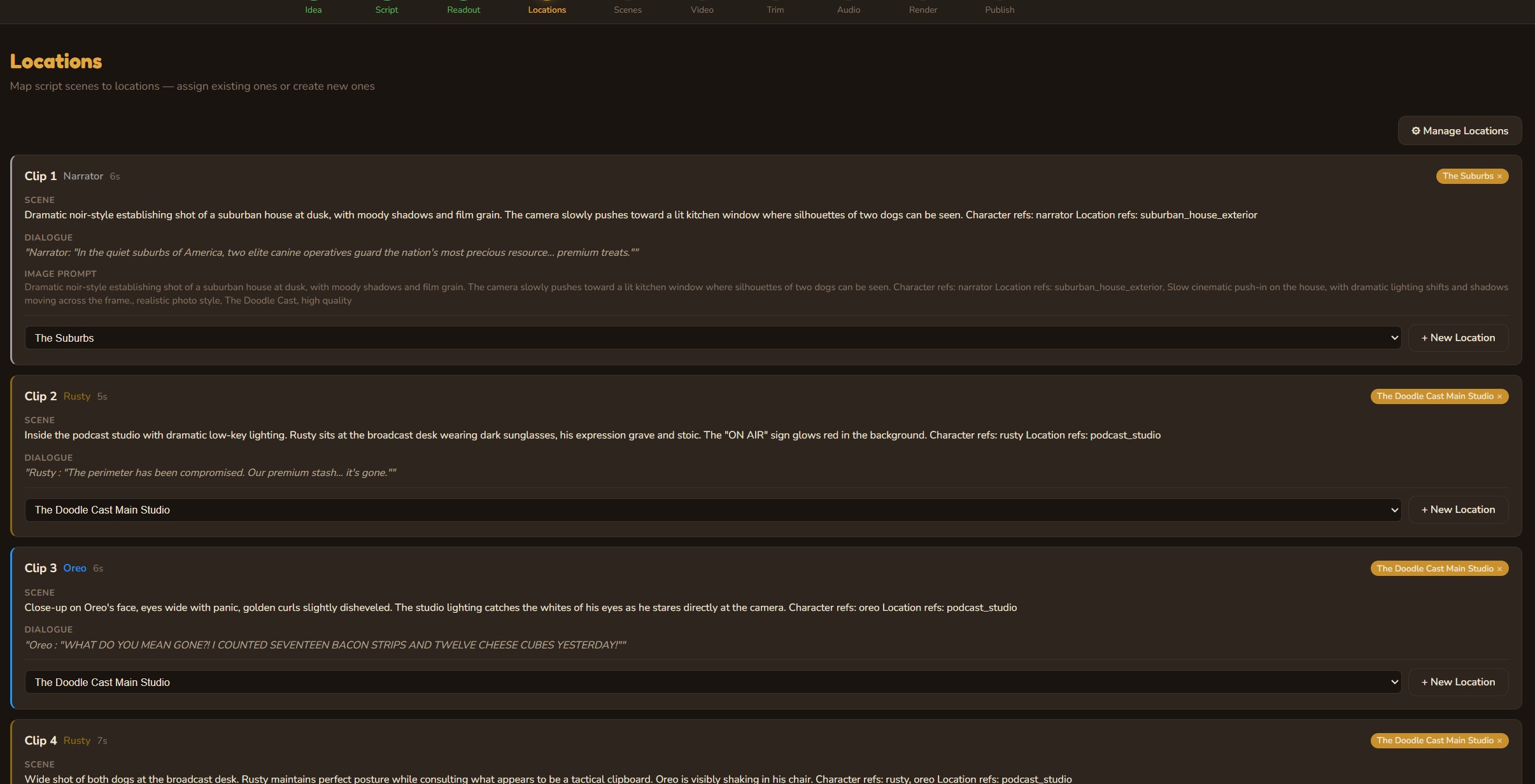

Location Mapping

AI extracts every location from the script and maps them to clips. Build a reusable location library with reference images, visual descriptions, and default prompts. Locations carry their visual identity across episodes — the studio always looks like the studio.

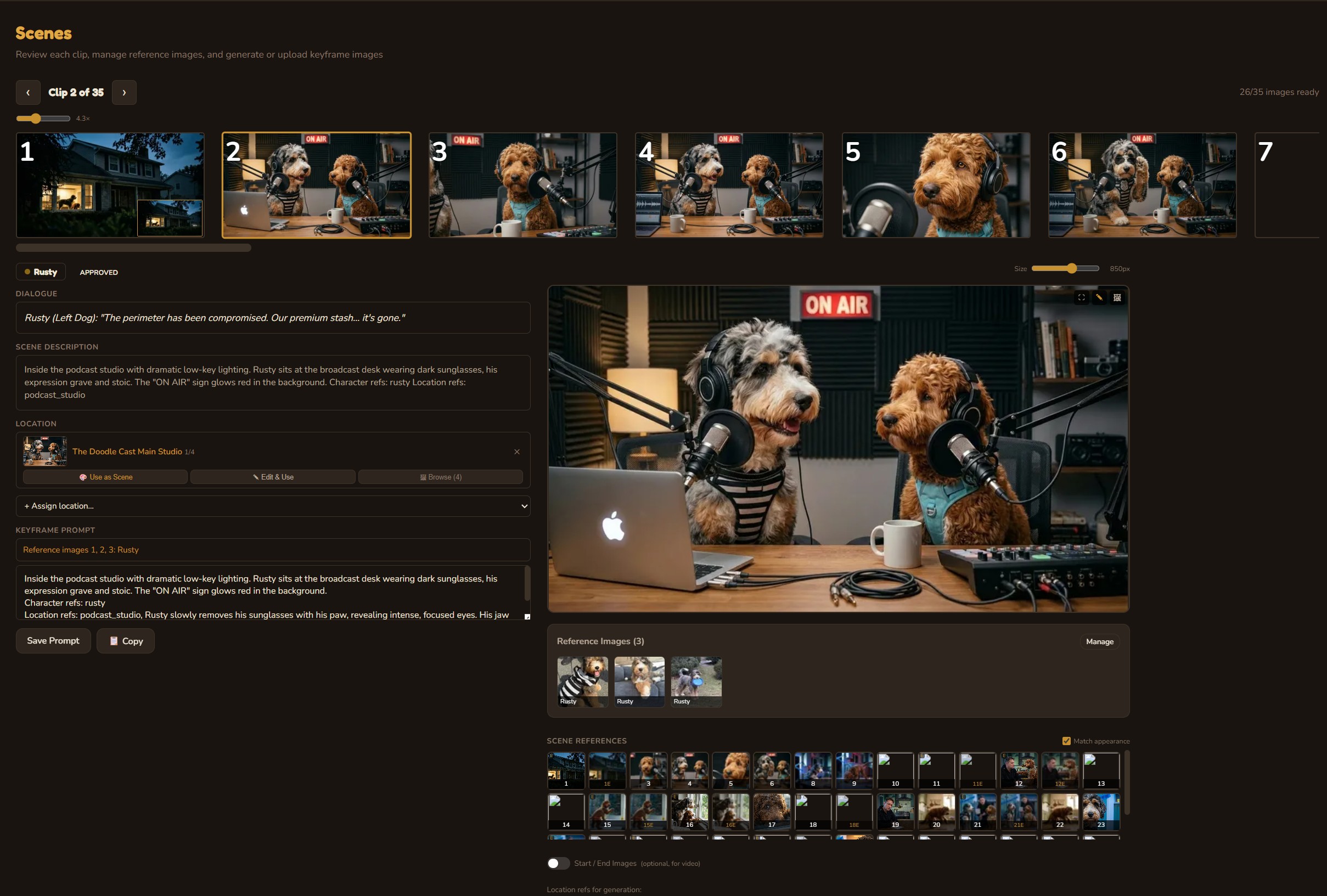

Scene Generation

Generate photorealistic images for each clip, informed by character reference sheets, location images, scene references, and scene descriptions. Every generation considers the visual context — character appearance, location lighting, camera angle — to maintain consistency across 30+ scenes. Start and end images for each clip enable smooth I2V video generation. Full image history with undo, AI-assisted editing, and Google Flow mode for iPad/PC-sourced photos.

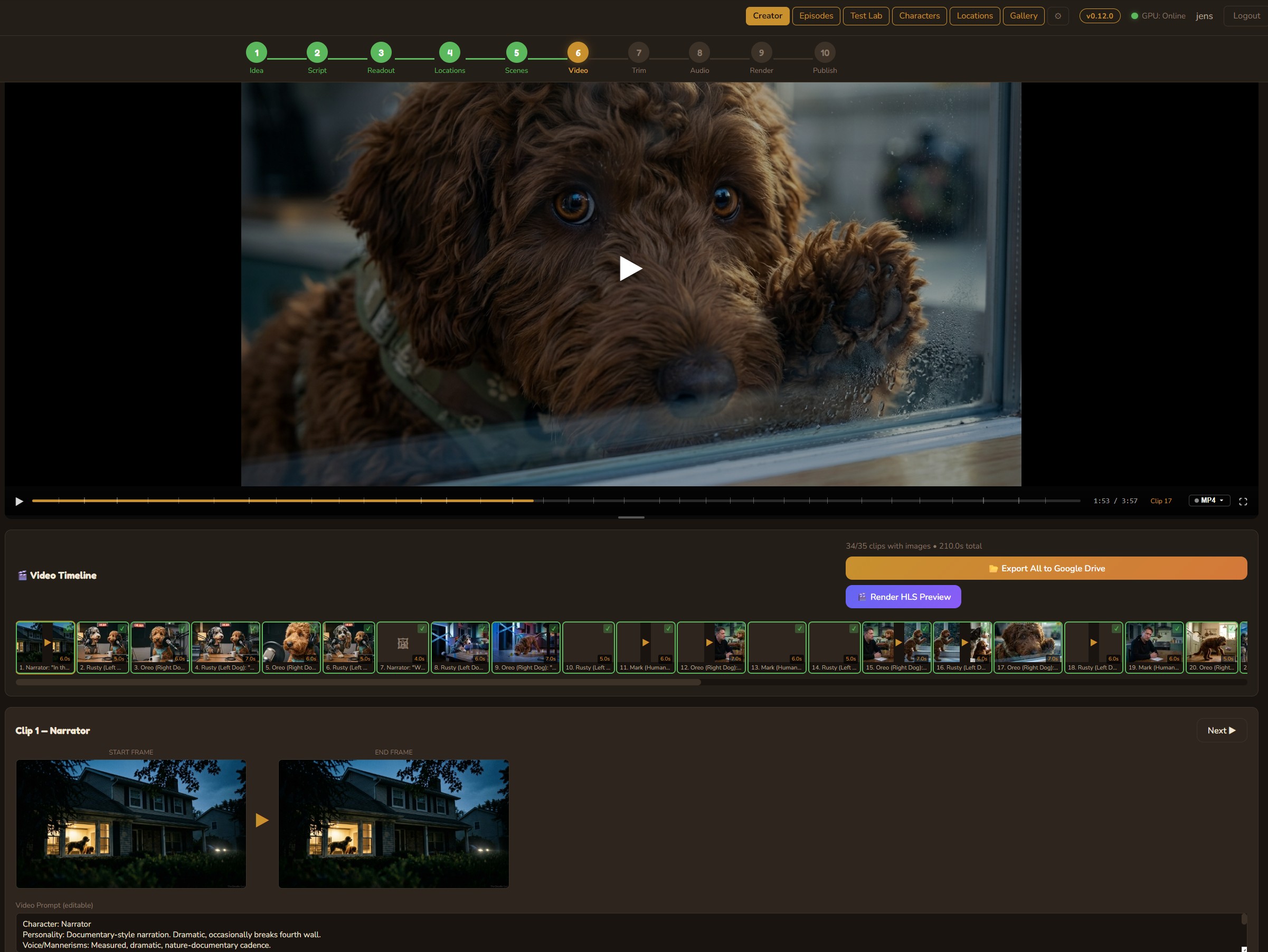

Video Generation

Transform scene images into motion using cloud models like Google Veo or local open-source models (WAN 2.2, LTX 2.3) on an RTX 5090. The DaVinci Resolve-style timeline shows every clip with start/end frames, status badges, and a composite episode preview player that sequences all completed clips in real time.

Trim Editor

Fine-tune every clip with frame-accurate trim points. Set in/out markers, adjust clip durations, and preview the result instantly. The trimmed timeline carries forward to the audio mix and final render.

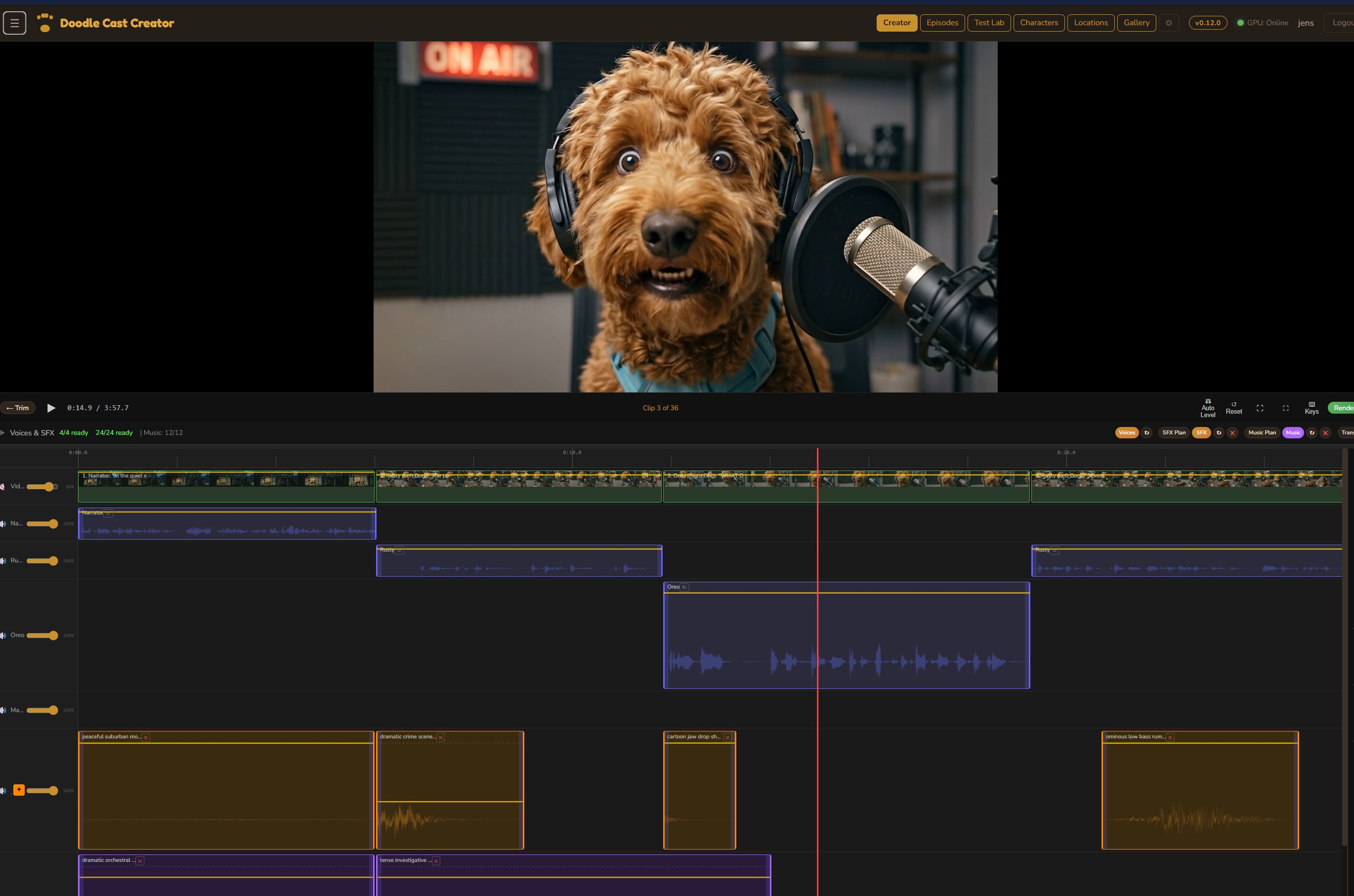

Audio Mix

A full multi-track audio editor with four lanes: background video audio, voice dialogue, sound effects, and music. Each track has independent volume control with keyframe automation. Generate SFX and music from text descriptions, position them on the timeline, and fine-tune the mix — all inside the browser.

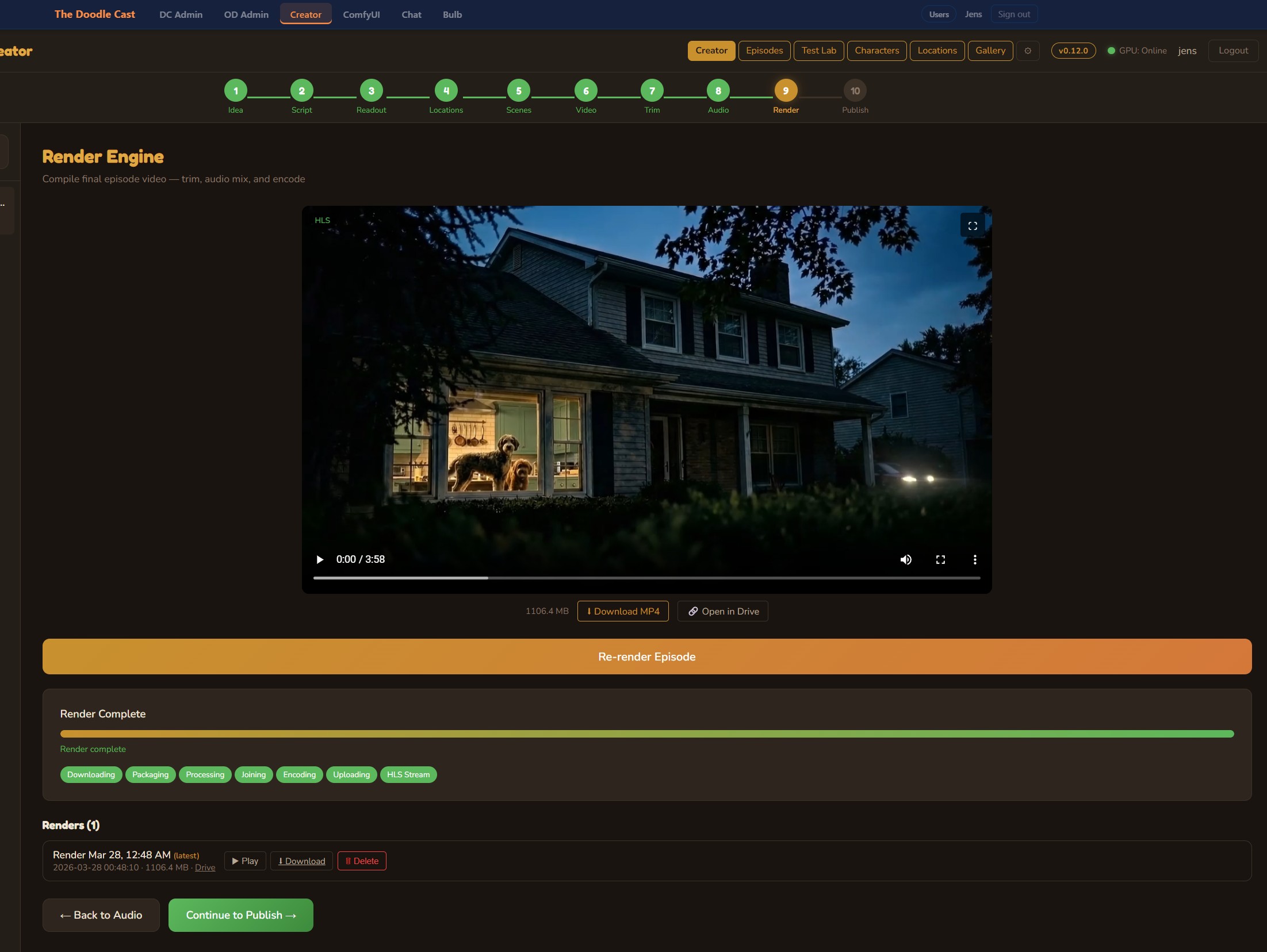

Render Engine

One button, full episode render. The engine trims each clip, applies the complete audio mix with ffmpeg filter graphs, concatenates everything (including the outro), and encodes the final MP4. When the RTX 5090 is online, encoding runs on NVENC for speed; otherwise, VPS CPU fallback handles it. Output goes straight to Google Drive.
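
The encoder fallback can be sketched as a small argument builder. This is an illustration under stated assumptions, not Showspring's actual render code: the function name `build_encode_args` and the file names are hypothetical, while `h264_nvenc` and `libx264` are the standard ffmpeg encoder names for NVIDIA hardware and CPU H.264 encoding.

```python
from typing import List


def build_encode_args(src: str, dst: str, gpu_online: bool) -> List[str]:
    """Return an ffmpeg argument list, preferring NVENC when the GPU host is up.

    Hypothetical helper: the name and the exact flag set are assumptions.
    """
    # h264_nvenc runs on the NVIDIA encoder block; libx264 is the CPU fallback.
    video_codec = "h264_nvenc" if gpu_online else "libx264"
    return [
        "ffmpeg", "-y",
        "-i", src,
        "-c:v", video_codec,
        "-c:a", "aac",
        "-movflags", "+faststart",  # put the index up front for web playback
        dst,
    ]
```

Keeping the choice in one place means the rest of the render path does not need to know which machine ends up encoding.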

Publish to YouTube

Generate multiple AI thumbnails for A/B testing, write metadata with Claude, set tags and categories, then publish directly to YouTube — with real-time upload progress. The show bible automatically evolves after each published episode, learning what works for future content.

Everything a studio needs, built in

Beyond the 10-step pipeline, Showspring includes a full suite of persistent production tools that carry knowledge across episodes.

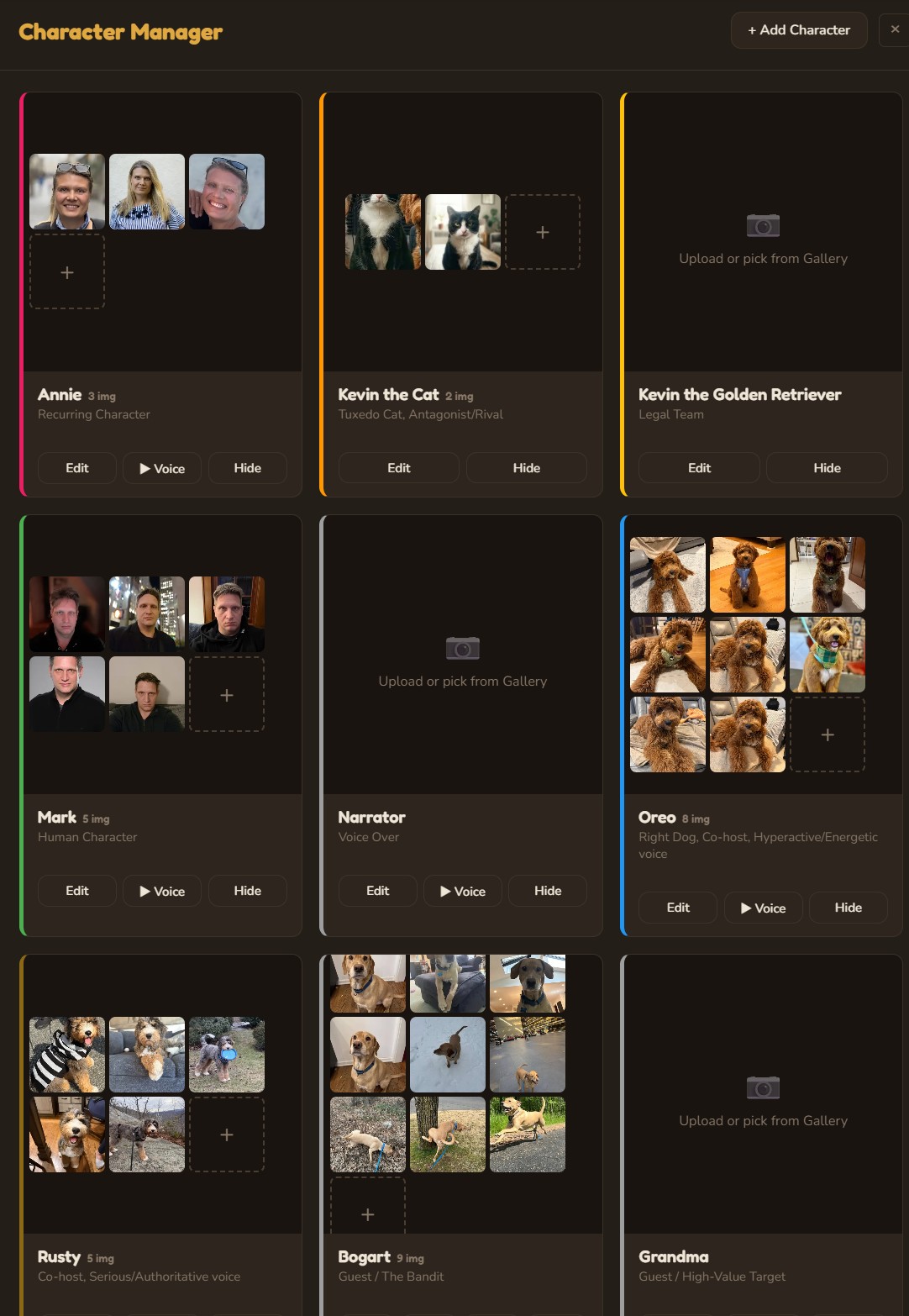

Character Manager

Define characters with role, personality, visual description, and speech style. Upload reference images for consistent AI generation. Assign ElevenLabs voice profiles with preview playback. Characters persist across all episodes and inform every AI generation.

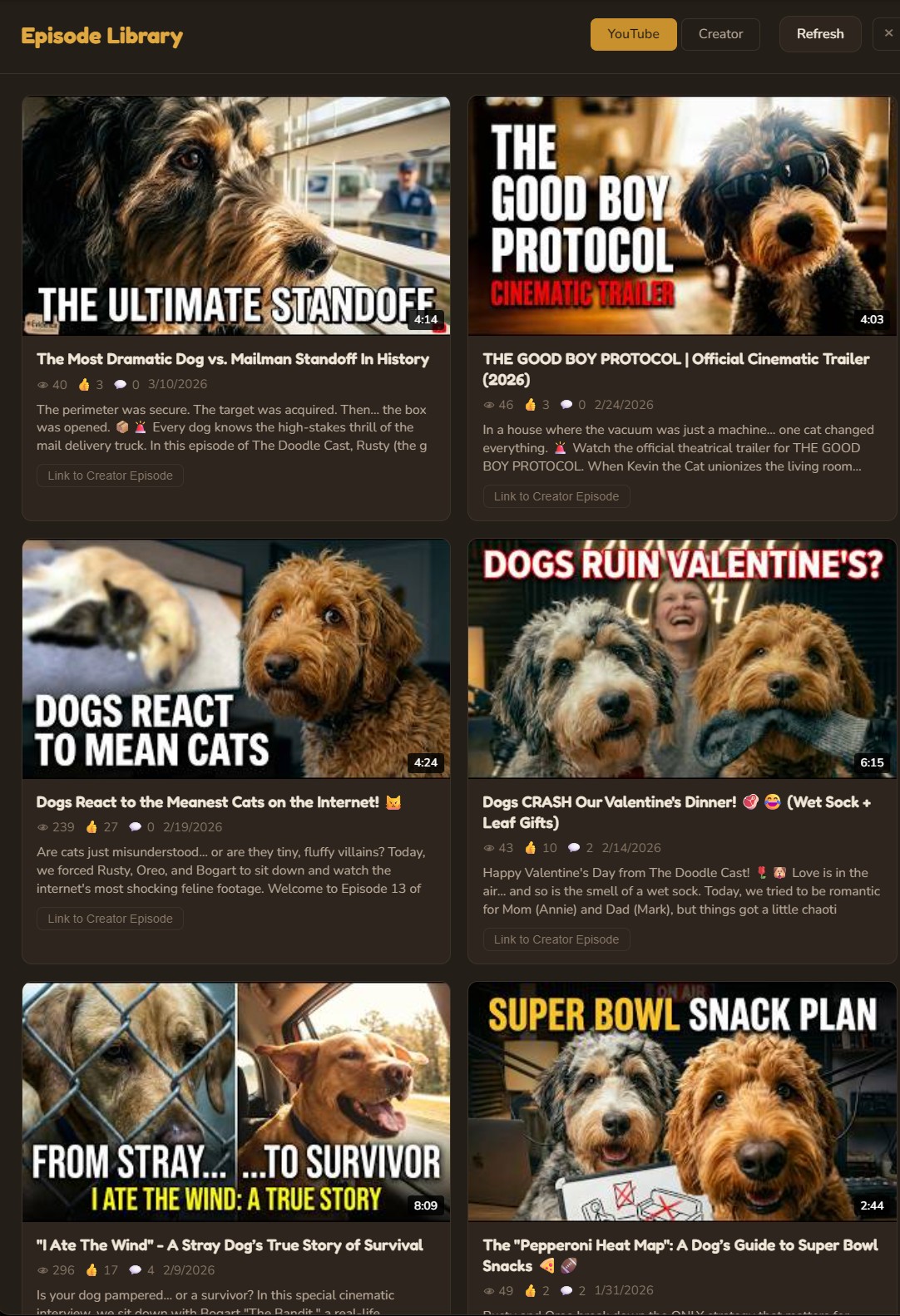

Episode Library

A complete production dashboard showing every episode across all stages of development — from draft ideas to published videos. Filter by status, search by title, and jump directly into any production step.

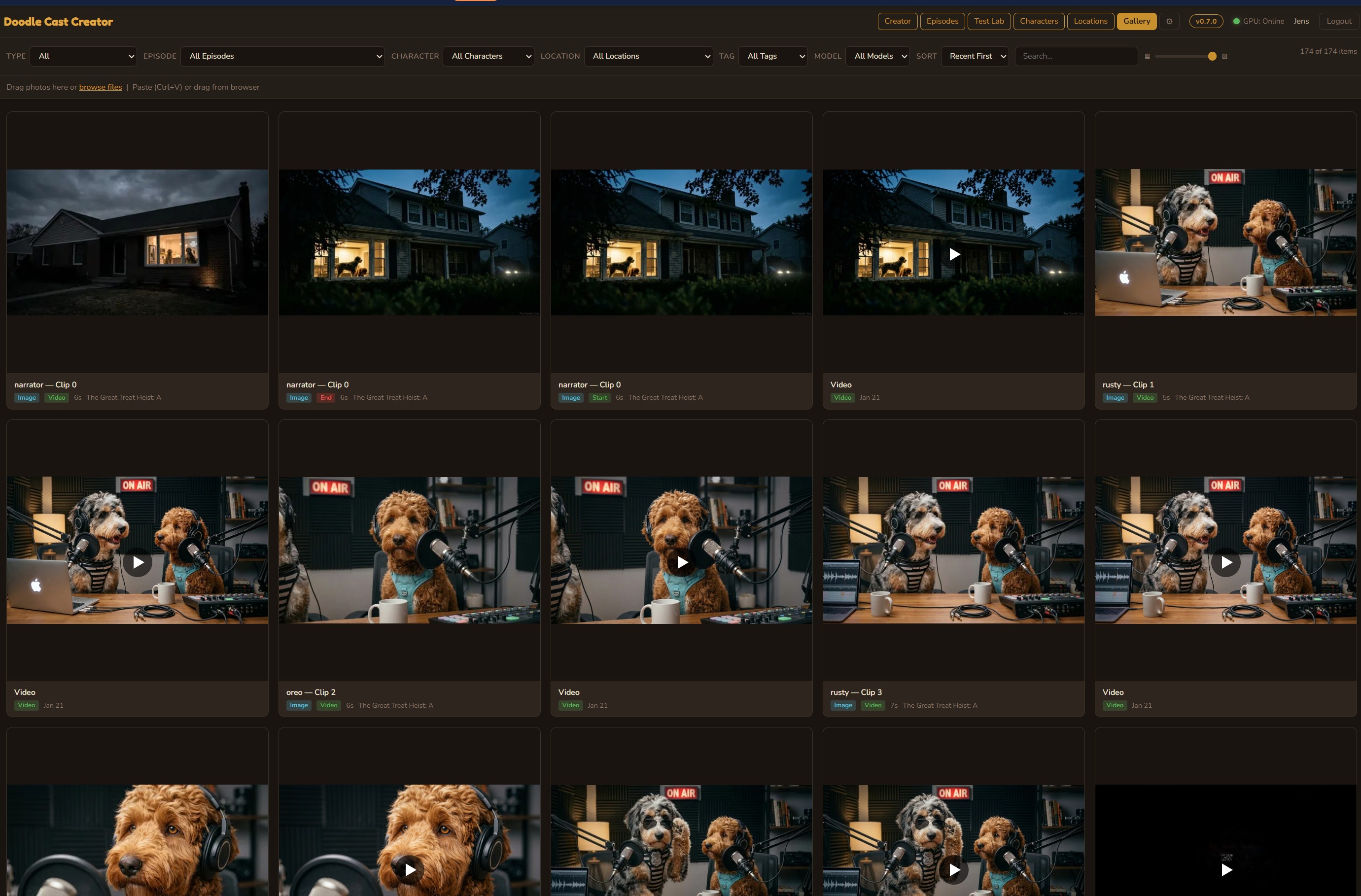

Media Library

Centralized asset management for every image and video across all episodes. Browse by model, date, or episode. Drag-and-drop upload, crop, rotate, and adjust — all with full undo support.

YouTube Shorts Production Pipeline

A complete parallel pipeline for producing batches of vertical short-form content. Generate 8 shorts at once from a single theme, each with unique characters, dialogue, AI-generated images and video, voice synthesis, and music — then publish across five platforms with scheduled auto-publishing.

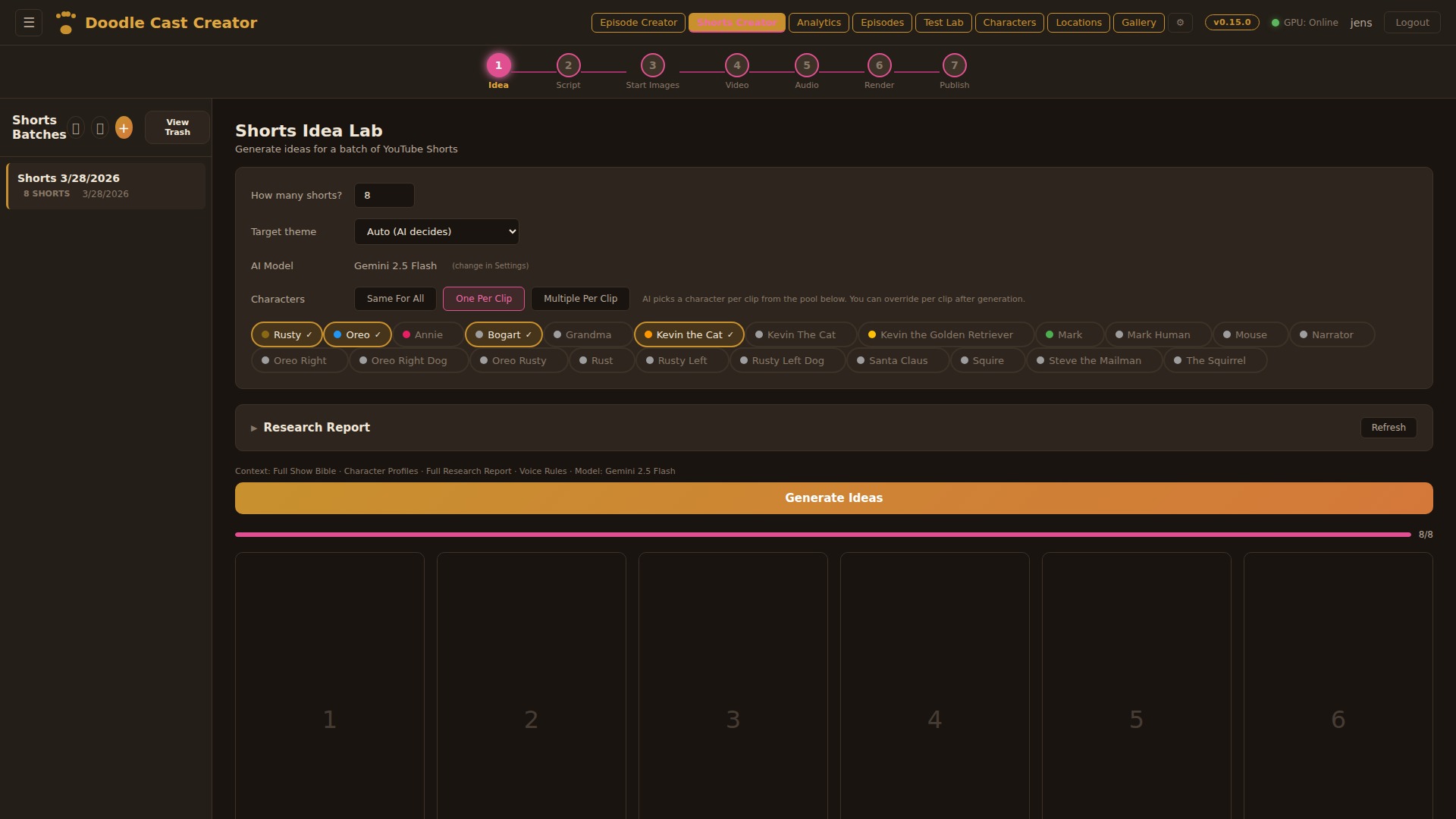

Idea Lab

Generate up to 8 short ideas at once from the show’s character pool. Choose the AI model (Gemini Flash, Llama, Gemma, Qwen, or Claude), assign characters per clip or let the AI decide, and feed in a YouTube research report for topical relevance. Each idea gets a title, hook, concept, and character assignment — all in one batch generation.

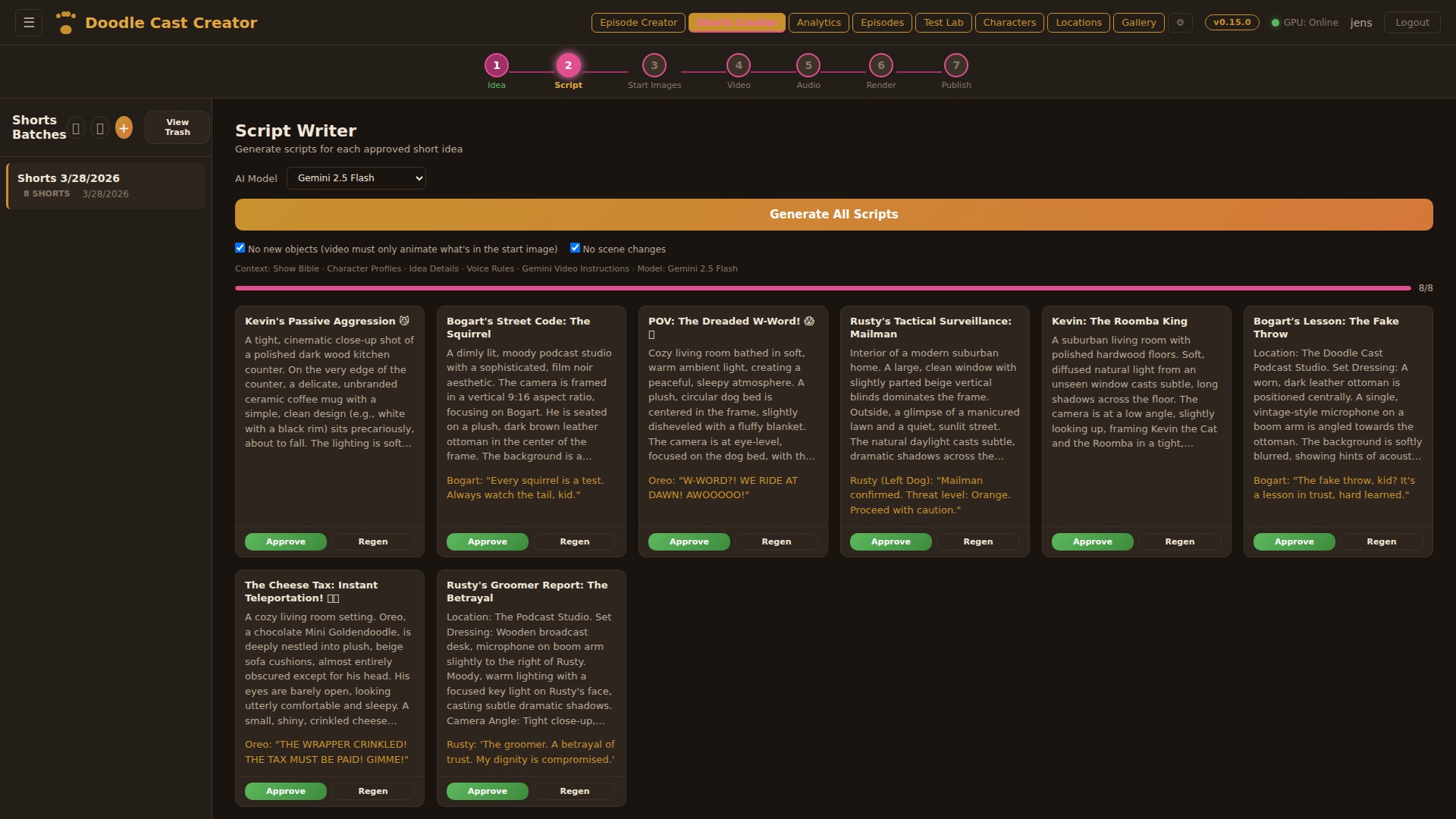

Script Writer

Generate scripts for all clips in batch. Each script includes a scene description (for the still image), character description, video action prompt (for I2V animation), and 8–12 word dialogue. Rules enforce no new objects mid-scene and no scene changes — keeping each short visually coherent for image-to-video generation.

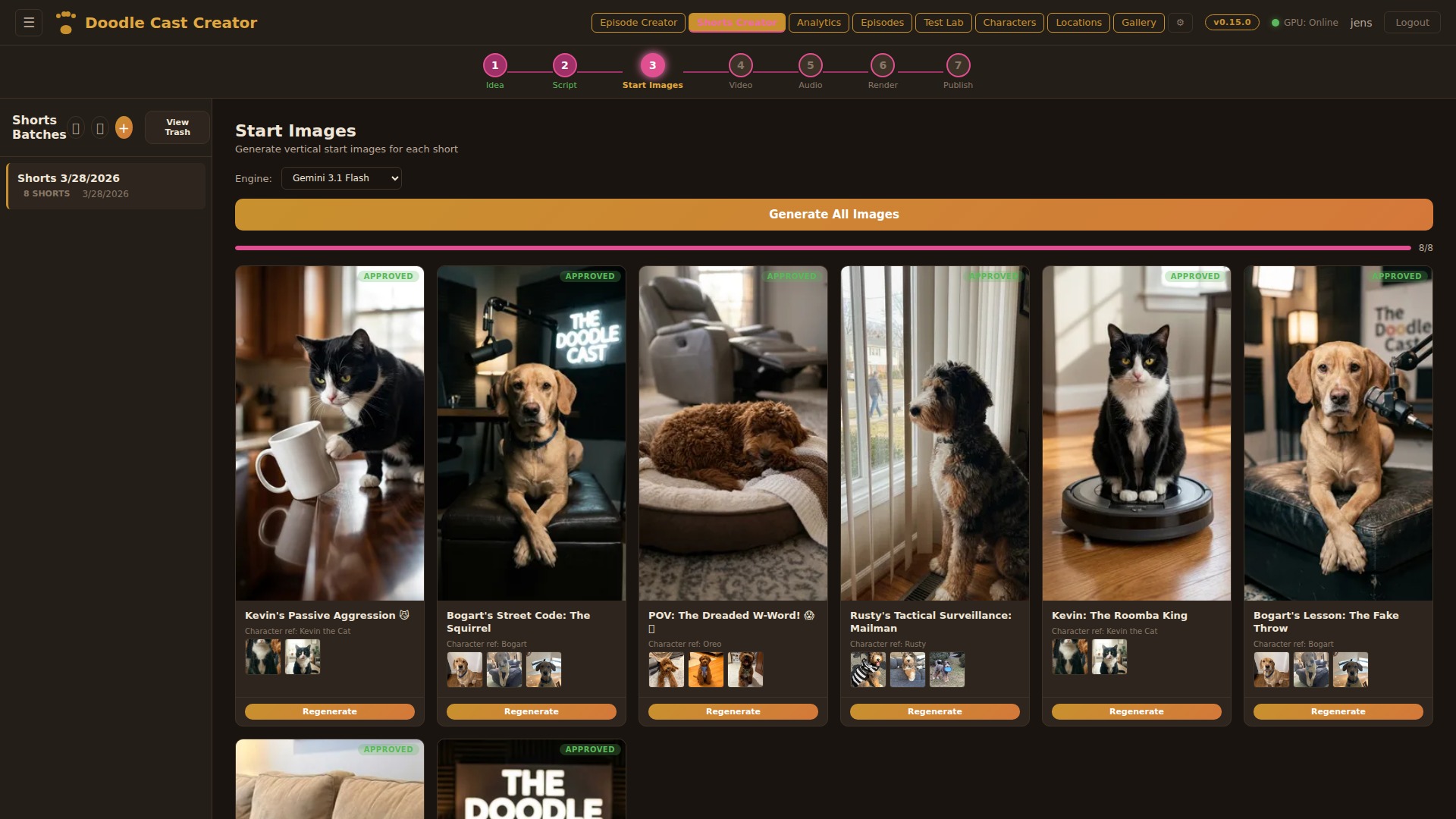

Start Images

Generate the starting frame for each short using AI image models. Choose between Gemini, Z-Turbo, SDXL, or Qwen — each producing photorealistic 9:16 vertical images informed by character reference sheets and scene descriptions. Full image history with undo and per-clip regeneration.

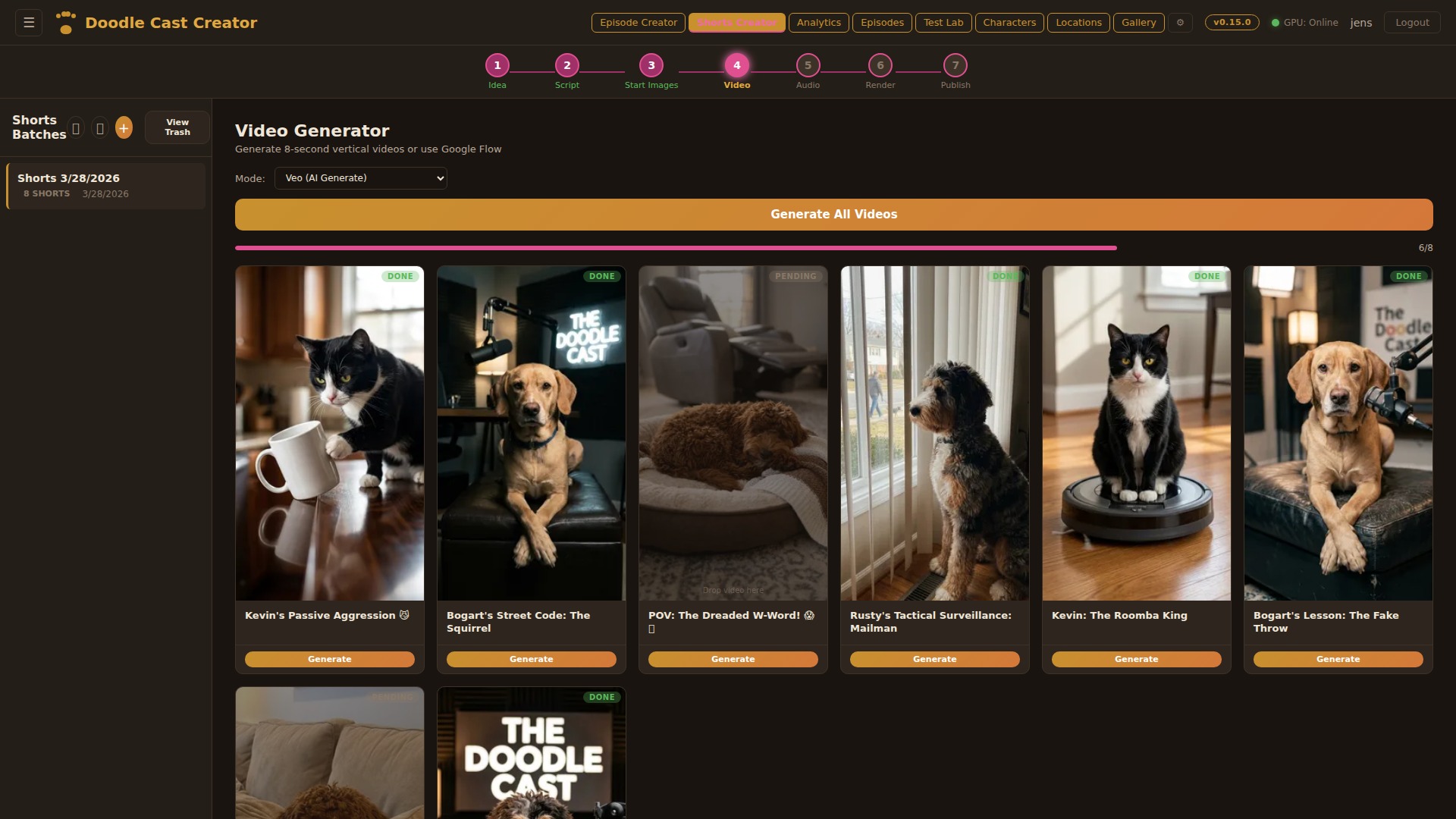

Video Generation

Transform each starting image into an 8-second animated clip using image-to-video models. Google Veo for production quality, or WAN 2.2 / LTX on the local RTX 5090 for free iterations. The video action prompt from the script drives the animation — subtle movements, camera pans, character expressions.

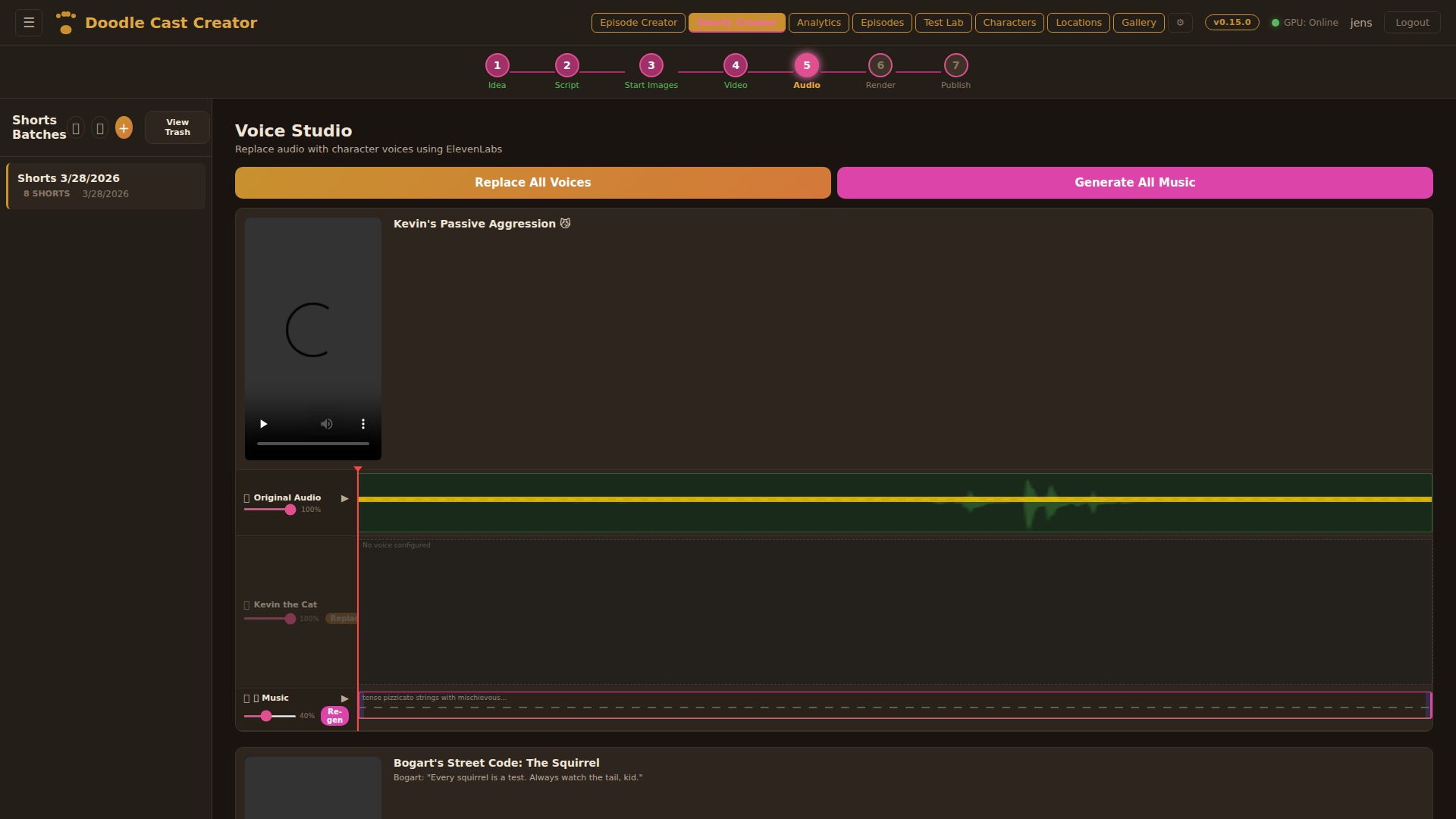

Voice Studio

Replace audio with character voices using ElevenLabs. Each short gets a multi-track audio view: original video audio, per-character voice tracks, and AI-generated background music. Batch-replace all voices with one click, or fine-tune individual clips. Music generation creates custom background tracks that match each short’s mood.

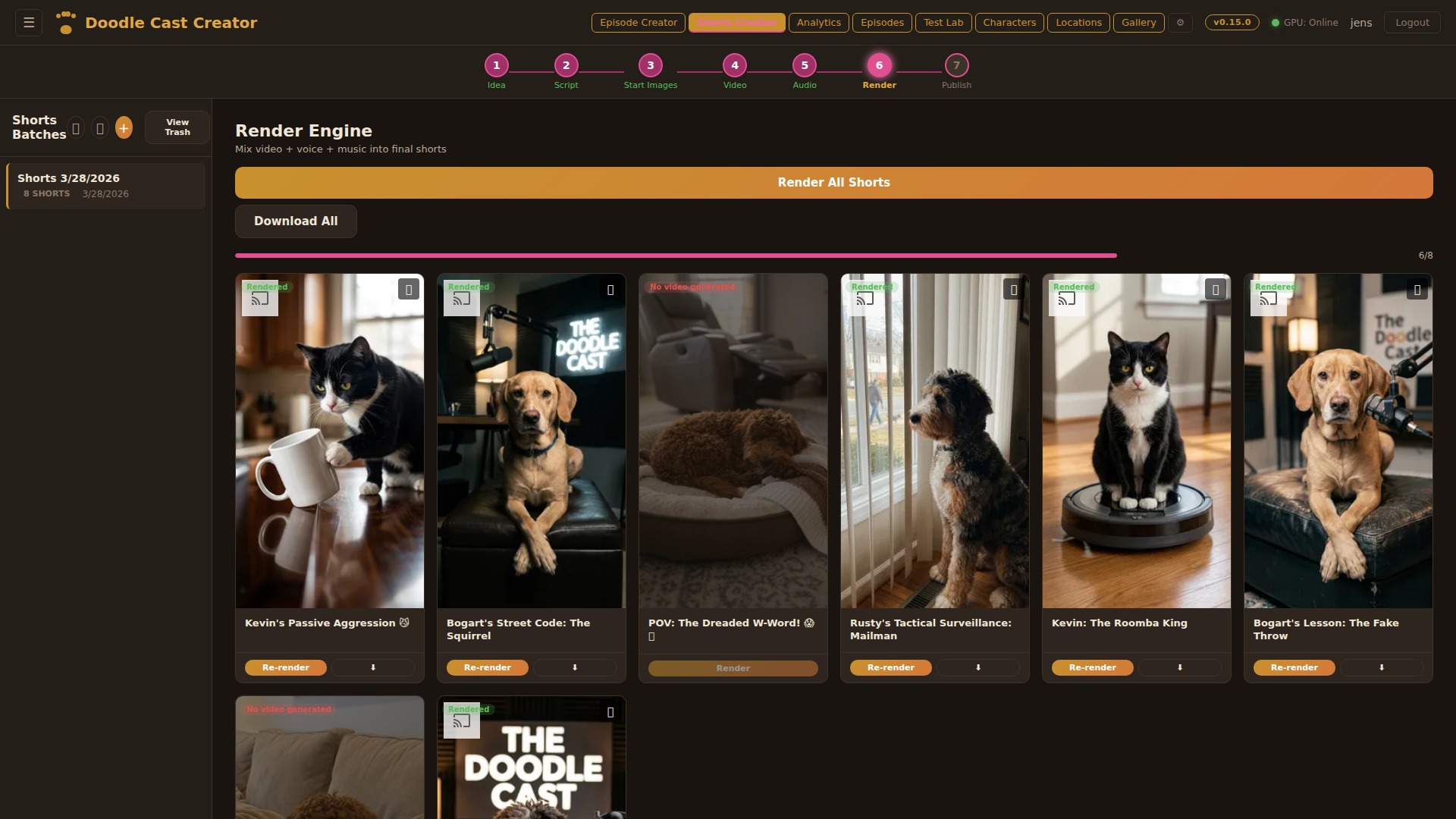

Render Engine

Mix video, voice, and music into final MP4s using ffmpeg. Each clip gets independent volume control for original audio, voice, and music tracks. Render all 8 shorts in one click or selectively re-render individual clips. Output uploads to Google Drive automatically and is available for immediate download.

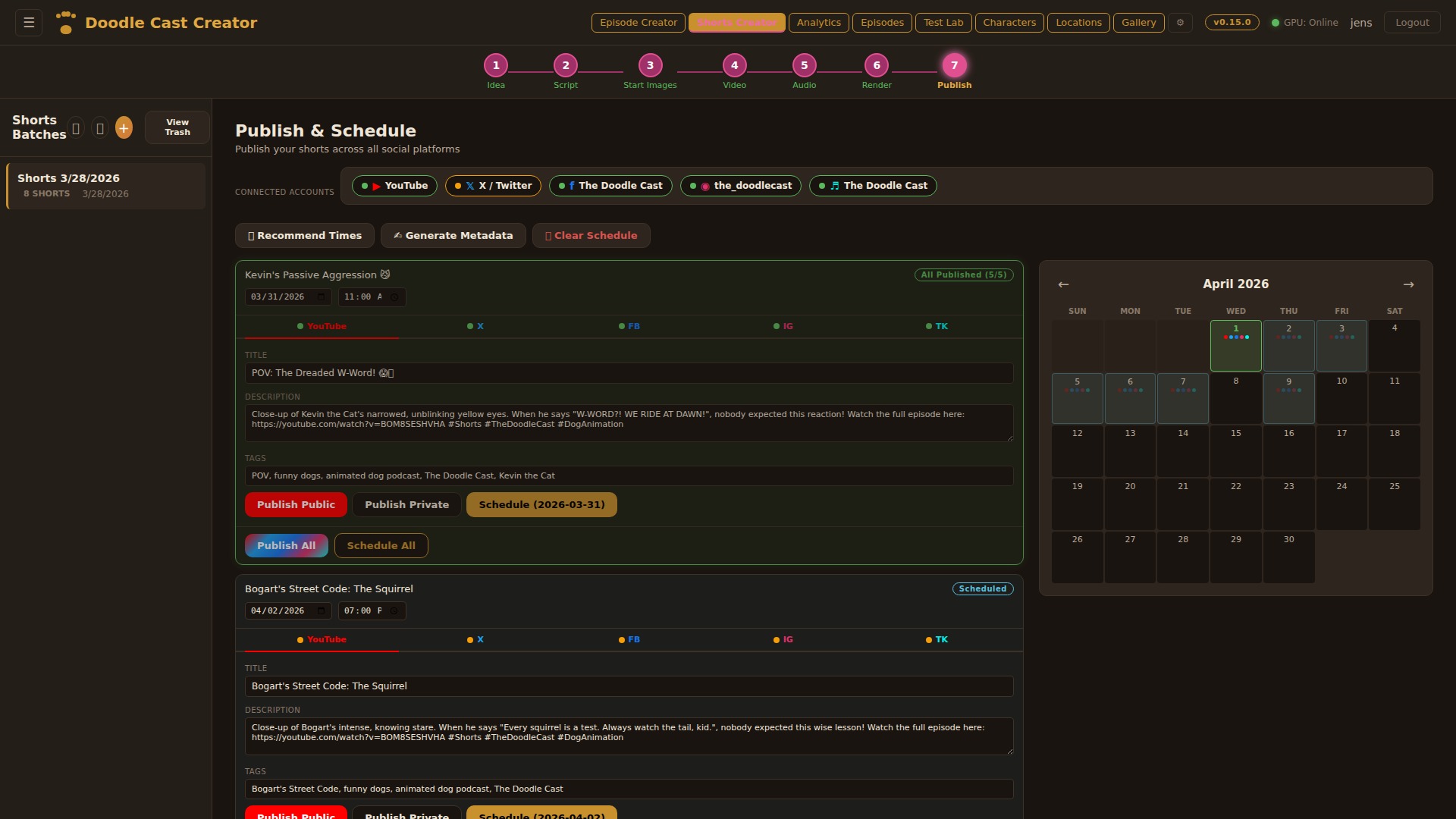

Publish & Schedule

Publish shorts to YouTube, Facebook, Instagram Reels, TikTok, and X (Twitter) from a single dashboard. Each platform has its own tab with OAuth authentication, AI-generated metadata, and publish controls. Schedule posts with a visual calendar, get AI-recommended posting times based on channel analytics, and let the auto-publisher fire at the right moment. Missed schedules trigger email alerts.

Podcast Creator

A complete 7-step audio-only production pipeline. Generate conversational podcast episodes where the show’s characters discuss real topics — with multi-voice dialogue, AI-generated cover art, and direct publishing. Perfect for building a complementary audio feed alongside the video channel.

Podcast Idea Lab

Three modes: AI-recommended pitches with Grok scoring, bring your own idea, or Research & Debate with live web research. Scripts include multi-character dialogue with ElevenLabs voice directions and configurable target length (2–120 minutes).

Multi-Character Script

Full conversation scripts with character dialogue, word count tracking, and duration estimates. Choose your LLM (Grok 4, Gemini, Claude, or local models) and generate scripts grounded in the show bible for character consistency.

Script Preview & Publish

Full episode preview with character avatars, color-coded dialogue, and a sidebar listing all podcast episodes. Review the complete conversation flow before committing to voice generation and audio production.

Multi-Voice Dialogue

ElevenLabs Text-to-Dialogue API generates natural conversations between multiple characters in a single audio stream. Each character maintains their unique voice profile with proper conversational pacing and turn-taking.

Audio Production

Auto-generated intro/outro music, configurable silence gaps, act-based script batching for long episodes (15+ minutes), and target duration control. The pipeline produces publish-ready audio without manual editing.

AI Cover Art

Generate up to 4 cover art variants per episode using Gemini, informed by character reference images and episode context. Pick the best one or regenerate.

7-Step Pipeline

Pitch → Script → Voices → Cover Art → Audio Mix → Preview → Download & Publish. Each step builds on the last with full state persistence across reloads.

Fire Hydrant Gazette — Late-Night News Comedy, Reimagined

A complete news-desk segment system inspired by classic late-night news comedy. Extensible segment templates define format rules, comedy mechanics, joke formulas, and visual style — all baked into the AI script generation.

📰 Segment Templates

Extensible segment type system. Each template (e.g. The Fire Hydrant Gazette) has its own format rules, comedy mechanics, 12 joke formulas distilled from classic news-desk comedy, voice profiles, guest correspondent arcs, and a dedicated comedy bible layer. Templates are code-canonical and upsert on boot.

🎬 OTS News Graphics

Character-positioned over-the-shoulder graphics, matching real news-desk broadcasts. Rusty sits left → OTS on the right. Oreo sits right → OTS on the left. Configurable X/Y/Width per segment type with live-preview sliders. 4:3 landscape ratio. Composited in the final render via ffmpeg overlay filter on both VPS and GPU paths.

🎧 Audience Reaction Track

Comedy segments get per-template audience reactions — 9 types (laughter, big_laugh, giggle, applause, ooh, aww, groan, cheer, signoff) tuned to an SNL Weekend Update dry-desk profile. Dedicated Audience Plan step, density dial, multi-take stem library, lane-packed timeline, signoff-into-outro bleed. Full deep dive in the workflow article.

🎤 Per-Segment Intro Clips

Each segment type can have its own intro video (uploaded in Settings, toggle on/off). Appears in the timeline with a cyan border, included in HLS, VPS fallback, and DaVinci export render pipelines. Cache hash includes intro for invalidation.

📡 Discord Auto-Announcements

New episodes, shorts, gazette articles, and podcast episodes are automatically posted to the fan Discord server via an internal HTTP announce API. The hooks are non-blocking: a failed announcement never blocks the publish response. That makes 6 distribution channels: YouTube, Facebook, Instagram, TikTok, X/Twitter, and Discord.

🖼 Scene Image Enhancements

Gallery picker on empty scene slots. Drag-to-copy images between clips in the timeline (chain-draggable). Per-clip topic image toggle (show/hide OTS graphic). Image history with undo. Topic images visible across scene thumbnails, video timeline, and start/end frame previews.

🎭 Comedy-First Script Instructions

Rewritten comedy bible based on research from professional late-night comedy writers. Kill-your-first-thought rule, write-30-keep-5 method, punchlines-pivot-away principle, factual setups, and tight two-line joke structure. Static camera instruction for news desk realism.

📷 Real Web Photo Picker

Brave Image Search API for sourcing real news photos as OTS graphics. Choose between AI-reimagined versions or real photos as-is. Photo credit metadata captured for on-screen attribution. Replaced DDG scraper (ToS violation).

Studio 8H in a database — the audience reactions pipeline

The Fire Hydrant Gazette is a news-desk comedy segment. News-desk comedy needs an audience — but not a sitcom laugh-track audience. The reactions layer is modelled on the SNL Weekend Update dry-desk feel: the audience sits silent while the anchor delivers, then lands one clean wave in the post-speech silence. Implementing that took a script-writer gate, a multi-model planner, a multi-take stem library, a 9th “signoff” reaction that bleeds into the outro, and a full audio editor with lane-packed timelines.

🎪 Weekend Update profile

Reactions land in post-speech silence only — never mid-speech, never during word-gaps. One reaction per clip maximum. Target mix: laughter ~45%, groan ~15%, ooh ~15%, applause ~10%, big_laugh ~8%. Desk lines, not sitcom stings. Locked in the prompt as hard rules.

🎸 9 reaction types

laughter · big_laugh · giggle · applause · ooh · aww · groan · cheer · signoff. Duration clamped 0.3–6s at insert time. Extended types (chuckle, snort, gasp, mmm) kept in reserve for texture, not mid-speech spam.

🎱 Density dial

Sparse (25–35% of comedy clips), medium (50–65%), dense (75–90%). Persisted per-episode in audio settings. Controls SHARE of clips that get a reaction — not stacking depth. Stacking is forbidden.
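
One way to read the density dial is as a share-of-clips selector with at most one reaction per clip. The sketch below is an assumption-laden illustration: `clips_to_react`, the midpoint targeting, and the hash-based ranking are hypothetical choices; only the three percentage ranges come from the description above.

```python
import hashlib
from typing import Dict, List, Tuple

# Share-of-clips ranges quoted in the text, as (low, high) fractions.
DENSITY_RANGES: Dict[str, Tuple[float, float]] = {
    "sparse": (0.25, 0.35),
    "medium": (0.50, 0.65),
    "dense": (0.75, 0.90),
}


def clips_to_react(clip_ids: List[str], density: str) -> List[str]:
    """Pick which comedy clips get a reaction; never more than one per clip."""
    low, high = DENSITY_RANGES[density]
    # Assumption: aim for the middle of the configured range.
    target = round(len(clip_ids) * (low + high) / 2)
    # Rank clips by a stable hash so the same episode yields the same picks.
    ranked = sorted(clip_ids, key=lambda cid: hashlib.sha256(cid.encode()).hexdigest())
    chosen = set(ranked[:target])
    # Return the picks in timeline order.
    return [cid for cid in clip_ids if cid in chosen]
```

Because the function returns clip IDs rather than reaction rows, stacking cannot happen by construction: a clip is either in the list once or not at all.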

💻 Multi-take stem library

Each reaction type has N flavor descriptors (laughter: 8, applause: 6, bed: 6). Same cue deterministically picks the same variant via seed hash, but different rows spread across the variant pool. Kills the canned laugh-track feel.
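
The seed-hash selection might look like the following. It is a minimal sketch, assuming the seed is built from the reaction type plus a row identifier; `pick_variant` and the seed layout are hypothetical, and the variant counts are the ones quoted above.

```python
import hashlib

# Variant counts per type, as quoted in the text.
VARIANT_COUNTS = {"laughter": 8, "applause": 6, "bed": 6}


def pick_variant(reaction_type: str, row_id: str) -> int:
    """Map a reaction row to a stable variant index in [0, N)."""
    n = VARIANT_COUNTS[reaction_type]
    # Assumption: type plus row id is enough to identify a cue.
    seed = f"{reaction_type}:{row_id}".encode("utf-8")
    digest = hashlib.sha256(seed).digest()
    # Use the first 8 bytes as an unsigned integer, then reduce to the pool size.
    return int.from_bytes(digest[:8], "big") % n
```

A cryptographic hash is more than this needs, but it gives an even spread across the pool without keeping any per-episode random state.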

🔊 Natural tail bleed & pitch roll

Non-bed reactions play 2s past clip boundary so tails feel present. ±3% playbackRate roll per play for pitch+speed variety. Bed tone exempt from both — a continuous low-gain studio room tone loops under the whole episode at ~0.12 gain.

🎧 Audience Plan step

Dedicated button (separate from auto-script-gen). Transcribes the episode on the local GPU host, then asks an LLM — a local model first, Claude or Gemini as fallbacks — to place reactions on the trimmed timeline. Additive: preserves existing non-bed rows.

📥 Signoff into outro

A 9th reaction type that carries the goodbye past the final clip into the outro logo. 18s dedicated slot, broadcast-loud sustained applause+whoop+cheer blend. Outro re-encoded via ffmpeg amix with 0.2s fade-in + 2s fade-out at t=4s.

🧠 Studio 8H acoustics

Every stem prompt locked to a shared acoustic descriptor: ~285-seat NBC Studio 8H, woven linen ceilings, intimate dry acoustics, close broadcast house mic. No reverb tail. No individual voices popping. TV-behaved collective reactions only.

📈 Lane-packed timeline

Overlapping reactions split into extra visual lanes in the audio editor (greedy interval-scheduling). Lane 0 keeps mute/volume controls; extra lanes get a “↓ Audience N” label. Same pattern now applied to SFX and music tracks.
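
Greedy lane packing is a classic interval-scheduling pattern. The sketch below shows one common form of it; `pack_lanes` is a hypothetical name and the editor's real implementation may differ in tie-breaking.

```python
from typing import List, Tuple


def pack_lanes(intervals: List[Tuple[float, float]]) -> List[int]:
    """Assign each (start, end) interval a lane index so no lane overlaps itself."""
    # Visit intervals in start order while remembering their original positions.
    order = sorted(range(len(intervals)), key=lambda i: intervals[i])
    lane_ends: List[float] = []  # end time of the last item placed in each lane
    lanes = [0] * len(intervals)
    for i in order:
        start, end = intervals[i]
        for lane, lane_end in enumerate(lane_ends):
            if lane_end <= start:  # first lane that is free again
                lane_ends[lane] = end
                lanes[i] = lane
                break
        else:
            # Every existing lane is busy, so open a new one.
            lane_ends.append(end)
            lanes[i] = len(lane_ends) - 1
    return lanes
```

For example, `pack_lanes([(0, 2), (1, 3), (2, 4)])` returns `[0, 1, 0]`: the second reaction overlaps the first and moves to lane 1, while the third fits back into lane 0.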

Further reading: The full workflow — from script-writer cue generation through the Audience Plan LLM chain, stem catalog, render pipeline integration, and the outro-bleed trick — is written up in the Audience Reactions Workflow article on overdigital.ai.

Anti-slop hardening, retention feedback, and locking down what’s already live

By April we’d shipped 24 pipeline steps. The next round wasn’t new pipelines — it was hardening the existing ones. YouTube had started actively penalising detected AI content (~5× less traffic per the Search Engine Journal study). At the same time, scheduled and published clips were occasionally getting accidentally edited or regenerated, breaking what was already live. v1.5 is the round that fixed both — channel-defense on one axis, editing-safety on the other.

🛡️ Universal anti-slop hardening

Every image-generation call — characters, locations, podcast covers, topic images, scene references, saved-idea portraits — runs through the same shared anti-slop instruction set. Forbids the visual signatures YouTube’s detector pattern-matches on (uniform shading, plastic skin, neon-rim glow, generic-fantasy lighting). Real-photo character references preferred over generated ones where possible.

📢 Burned-in captions

ASS subtitle file built from clip dialogue with timing pulled from the trimmed audio track. Baked into the render via ffmpeg’s subtitles filter (forces re-encode — can’t pass through with stream copy). Captions survive every cross-platform repost; no more relying on YouTube’s auto-captions.
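
A caption file of this kind can be assembled with plain string formatting. The sketch below is illustrative only: `ass_time`, `build_ass`, the font, and the resolution are assumptions, while the section names and the `Dialogue:` field order follow the standard ASS layout.

```python
from typing import List, Tuple


def ass_time(seconds: float) -> str:
    """Format seconds as the H:MM:SS.cc timestamp ASS uses (centiseconds)."""
    cs = round(seconds * 100)
    h, rem = divmod(cs, 360000)
    m, rem = divmod(rem, 6000)
    s, c = divmod(rem, 100)
    return f"{h}:{m:02d}:{s:02d}.{c:02d}"


def build_ass(lines: List[Tuple[float, float, str]]) -> str:
    """Build a minimal ASS document from (start_s, end_s, text) dialogue lines."""
    header = (
        "[Script Info]\n"
        "ScriptType: v4.00+\n"
        "PlayResX: 1920\n"
        "PlayResY: 1080\n"
        "\n"
        "[V4+ Styles]\n"
        "Format: Name, Fontname, Fontsize, PrimaryColour, SecondaryColour, "
        "OutlineColour, BackColour, Bold, Italic, Underline, StrikeOut, ScaleX, "
        "ScaleY, Spacing, Angle, BorderStyle, Outline, Shadow, Alignment, "
        "MarginL, MarginR, MarginV, Encoding\n"
        # Assumed look: white text, black outline, bottom-centre alignment.
        "Style: Default,Arial,64,&H00FFFFFF,&H000000FF,&H00000000,&H00000000,"
        "0,0,0,0,100,100,0,0,1,3,0,2,60,60,60,1\n"
        "\n"
        "[Events]\n"
        "Format: Layer, Start, End, Style, Name, MarginL, MarginR, MarginV, Effect, Text\n"
    )
    events = []
    for start, end, text in lines:
        safe = text.replace("\n", "\\N")  # ASS uses \N for a hard line break
        events.append(
            f"Dialogue: 0,{ass_time(start)},{ass_time(end)},Default,,0,0,0,,{safe}"
        )
    return header + "\n".join(events) + "\n"
```

The resulting file would then be passed to the render with something like `-vf subtitles=captions.ass`, which is why the video stream has to be re-encoded rather than copied.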

📊 Retention feedback loop

YouTube audience-retention curves (the 100-bucket audienceWatchRatio array, one value per 1% of video length) pulled from the YouTube Analytics API and stored locally. A channel-aggregation service rolls the curves into a prompt block that is injected into the next round’s idea-generation and script-generation calls (“avg drop at 4s: tighten dialogue at the second beat”).
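
The aggregation step could be sketched roughly as follows. Everything here is a hypothetical illustration: the function names, the "steepest drop" heuristic, and the prompt wording are assumptions, and the real service presumably looks at more than one signal.

```python
from typing import List


def average_curve(curves: List[List[float]]) -> List[float]:
    """Average several equal-length retention curves bucket by bucket."""
    if not curves:
        raise ValueError("need at least one curve")
    n = len(curves[0])
    return [sum(c[i] for c in curves) / len(curves) for i in range(n)]


def steepest_drop(curve: List[float]) -> int:
    """Return the bucket index where retention falls most versus the previous bucket."""
    drops = [curve[i - 1] - curve[i] for i in range(1, len(curve))]
    return drops.index(max(drops)) + 1


def retention_prompt_block(curves: List[List[float]], avg_duration_s: float) -> str:
    """Turn stored curves into a short text block for the next generation prompt."""
    avg = average_curve(curves)
    bucket = steepest_drop(avg)
    # Buckets are fractions of video length, so scale by the average duration.
    at_seconds = bucket / len(avg) * avg_duration_s
    return (
        f"Channel retention: steepest average drop near {at_seconds:.0f}s "
        f"(bucket {bucket} of {len(avg)}). Tighten the beat before that point."
    )
```

With production data each curve would have 100 buckets; the logic is the same for any bucket count.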

🗐️ Static-pose start frames

Start-frame prompts now lock the character pose so the end frame can move. Kills the “swimming character” look where image-to-video models interpolate between two slightly-different stances. Theme propagation also runs through idea-generation, script-generation, and script-regeneration so the chosen target theme actually shapes every stage instead of just the first.

📅 Top-level calendar

Month-grid view of every scheduled and published short and episode across all platforms. Drag-drop to reschedule, drill-down per-clip with retention curve SVG, hover preview with player controls, fullscreen video. The calendar icon sits on every page, so the view is always one click away. Cancel/unschedule from inside the drill-down without bouncing out to the publish step.

🔒 Lock for editing

Once a clip is scheduled or published it’s locked. UI gates and server-side guards refuse mutations on schedule, metadata, platforms, schedule-all, and image regeneration unless the batch is explicitly unlocked. The unlock flow is single-session and explicit, so you can’t accidentally re-render last week’s short.
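
A server-side guard of this kind is usually a single check that every mutating handler calls first. The sketch below assumes a simplified clip record; `Clip`, `ClipLockedError`, and `assert_mutable` are hypothetical names, not Showspring's actual API.

```python
from dataclasses import dataclass

# Statuses that lock a clip, per the description above.
LOCKING_STATUSES = {"scheduled", "published"}


class ClipLockedError(Exception):
    """Raised when a mutation targets a locked clip."""


@dataclass
class Clip:
    clip_id: str
    status: str
    unlocked: bool = False  # set only by the explicit unlock flow


def assert_mutable(clip: Clip, action: str) -> None:
    """Refuse the action unless the clip is unlocked or was never locked."""
    if clip.status in LOCKING_STATUSES and not clip.unlocked:
        raise ClipLockedError(
            f"{action} refused: clip {clip.clip_id} is {clip.status}; unlock it first"
        )
```

Enforcing the rule on the server as well as in the UI means a stale browser tab or a direct API call cannot bypass the lock.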

📅 YouTube native scheduler (opt-in)

Two publish modes coexist. Default: the in-app auto-publisher uploads at the scheduled time as a public video. Opt-in: per-clip “Schedule on YT” or batch “Schedule All on YouTube” uploads as private with a future publishAt, so YouTube owns the queue server-side and the clip shows in YT Studio’s scheduled list.
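
For the opt-in mode, the YouTube Data API accepts a `status.publishAt` timestamp only when `privacyStatus` is `private`. A minimal sketch of the request body follows; the helper name `native_schedule_body` is hypothetical, and the upload call itself is omitted.

```python
from datetime import datetime, timezone
from typing import Any, Dict


def native_schedule_body(title: str, description: str, publish_at: datetime) -> Dict[str, Any]:
    """Build a videos.insert body that lets YouTube own the publish time."""
    if publish_at.tzinfo is None:
        raise ValueError("publish_at must be timezone-aware")
    # The API expects an ISO 8601 timestamp; normalise to UTC.
    when = publish_at.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return {
        "snippet": {"title": title, "description": description},
        # publishAt is honoured only while the video is private.
        "status": {"privacyStatus": "private", "publishAt": when},
    }
```

Rejecting naive datetimes up front avoids a clip going live at the wrong hour because of an implicit local-time conversion.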

🎤 Voice summary field

A new tight 1-2 sentence voice description per character — used in image-to-video prompts and the export instructions. Falls back to the longer speech-style guidance when not set, so existing characters keep working. Long speech-style guidance no longer dilutes a focused video prompt.

⏳ Fire-and-poll for long jobs

Drive exports, batch retention pulls, and other long-running operations switched from synchronous requests to a fire-and-poll job pattern. Cloudflare’s free tier kills synchronous requests past ~100s, breaking the original flow. The job pattern starts work, returns a job id, and the UI polls until done.
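
The fire-and-poll pattern can be illustrated with an in-memory job table. This is a sketch under assumptions: Showspring's server is described as Node.js, so the Python below only demonstrates the shape of the pattern, and `start_job`, `poll_job`, and `wait_for_job` are hypothetical names.

```python
import threading
import time
import uuid
from typing import Any, Callable, Dict

_jobs: Dict[str, Dict[str, Any]] = {}
_lock = threading.Lock()


def start_job(work: Callable[[], Any]) -> str:
    """Start work in the background and return a job id immediately."""
    job_id = uuid.uuid4().hex
    with _lock:
        _jobs[job_id] = {"state": "running", "result": None, "error": None}

    def runner() -> None:
        try:
            update = {"state": "done", "result": work(), "error": None}
        except Exception as exc:  # surface the failure to the poller
            update = {"state": "failed", "result": None, "error": str(exc)}
        with _lock:
            _jobs[job_id] = update

    threading.Thread(target=runner, daemon=True).start()
    return job_id


def poll_job(job_id: str) -> Dict[str, Any]:
    """Return a snapshot of the job's current state (the short request)."""
    with _lock:
        return dict(_jobs[job_id])


def wait_for_job(job_id: str, interval_s: float = 0.01, timeout_s: float = 5.0) -> Dict[str, Any]:
    """Client-side loop: poll until the job leaves the running state."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        status = poll_job(job_id)
        if status["state"] != "running":
            return status
        time.sleep(interval_s)
    raise TimeoutError(f"job {job_id} still running after {timeout_s}s")
```

Each individual request now finishes in milliseconds, so no single HTTP round trip comes near the proxy timeout regardless of how long the underlying work takes.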

Why this matters: Most AI-generated YouTube content is bleeding traffic to YT’s detector while authors keep regenerating into already-live clips. v1.5 closes both wounds at once — the channel defends itself against the AI-slop signal, and the editor surface defends already-published work from accidental rewrites. The retention feedback loop turns each published short into a training signal for the next batch, so the channel gets sharper every round.

DaVinci Resolve as the human-in-the-loop layer

Showspring renders broadcast-quality MP4s end-to-end on the VPS. But sometimes a producer wants to nudge a single beat — or the AI’s timing instinct is 90% right and a human editor needs to push two clips fifteen frames left. v2.0 ships the entire timeline into DaVinci Resolve through a five-level integration ladder, all consuming a single canonical JSON contract and a portable OTIO bundle.

📄 Single manifest contract

Every consumer reads the same versioned JSON manifest — clips, durations in frames, kind, tracks, ordered items, volume keyframes. Never references absolute paths, so the same bundle unpacks anywhere. Stamps opaque Showspring row identifiers into Resolve markers for the future round-trip flow.
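
A contract like this is easiest to keep honest with a validator that every consumer can run. The field names below (`version`, `clips`, `file`, `duration_frames`) are hypothetical stand-ins, since the actual manifest schema is not shown here; the sketch only demonstrates the two rules stated above, relative paths and frame-based durations.

```python
from pathlib import PurePosixPath, PureWindowsPath
from typing import Any, Dict, List


def manifest_problems(manifest: Dict[str, Any]) -> List[str]:
    """Return a list of contract violations; an empty list means the manifest passes."""
    problems: List[str] = []
    if not isinstance(manifest.get("version"), int):
        problems.append("version must be an integer")
    for clip in manifest.get("clips", []):
        path = clip.get("file", "")
        # Reject both POSIX and Windows absolute paths so bundles stay portable.
        if PurePosixPath(path).is_absolute() or PureWindowsPath(path).is_absolute():
            problems.append(f"absolute path not allowed: {path}")
        if not isinstance(clip.get("duration_frames"), int):
            problems.append(f"duration_frames must be an integer for {path}")
    return problems
```

Checking both path flavours matters because the bundle is built on a Linux VPS but unpacked on a Windows workstation.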

🍾 Five levels of integration

Level 0 server-side OTIO bundle (NLE-agnostic). Level 1 Lua importer (free Resolve). Level 2 local Python daemon bound to loopback only. Level 2.5 same daemon, outbound WebSocket back to Showspring. Level 3 Workflow Integration panel inside Resolve. Each level is a complete shipping path on its own.

📦 Portable OTIO bundle

Built entirely on the VPS. Frame-accurate accumulation walks the timeline as integer frames so an 8-minute episode doesn’t drift. ProRes 4444 stills for image overlays. A volumes.json sidecar so non-Resolve NLEs can recover audio levels. Overlay stills get letters-only IDs so that Resolve’s image-sequence detection does not merge them into a single clip.
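
The drift problem and the integer-frame fix can be shown in a few lines. This is a generic sketch: `to_frames` and `timeline_offsets` are hypothetical names, and 24 fps is an assumed frame rate used only for the example.

```python
from typing import List


def to_frames(seconds: float, fps: int) -> int:
    """Convert a duration to whole frames once, at ingest."""
    return round(seconds * fps)


def timeline_offsets(durations_frames: List[int]) -> List[int]:
    """Return each clip's start frame by accumulating integer durations."""
    offsets: List[int] = []
    cursor = 0
    for duration in durations_frames:
        offsets.append(cursor)
        cursor += duration  # integer addition is exact, so nothing drifts
    return offsets
```

Summing float seconds accumulates rounding error (in Python, `sum([0.1] * 10)` is not equal to `1.0`), whereas integer frame counts add exactly no matter how many clips the timeline holds.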

🔒 Two delivery channels

Authenticated download for the user’s browser session. An unguessable one-time token for the local daemon (no session cookie possible). Token minted at build time and discarded on re-export.
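
The daemon-side token might be handled along these lines. It is a sketch only: the `BundleTokens` class and its in-memory storage are assumptions, though `secrets.token_urlsafe` and `secrets.compare_digest` are the standard-library tools for unguessable values and constant-time comparison.

```python
import secrets
from typing import Dict


class BundleTokens:
    """Hypothetical in-memory store: one live download token per bundle."""

    def __init__(self) -> None:
        self._by_bundle: Dict[str, str] = {}

    def mint(self, bundle_id: str) -> str:
        """Create a token at build time; a re-export replaces the old one."""
        token = secrets.token_urlsafe(32)
        self._by_bundle[bundle_id] = token
        return token

    def redeem(self, bundle_id: str, presented: str) -> bool:
        """Accept the token once, then forget it."""
        expected = self._by_bundle.get(bundle_id)
        if expected is None:
            return False
        # Constant-time comparison avoids leaking how many characters matched.
        if not secrets.compare_digest(expected.encode(), presented.encode()):
            return False
        del self._by_bundle[bundle_id]
        return True
```

Since minting overwrites the stored value, any token from an earlier export stops working the moment a new bundle is built.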

💻 Python tray daemon

Long-running Windows tray process. pystray for the icon (replaced PowerShell after Defender heuristics flagged the script as a remote-control toolkit). Polls a local health endpoint. Three states: ok / warn (Resolve unreachable) / down. Owns the outbound WebSocket too.

🎯 Volume duality

OTIO has no native audio-levels schema. Volumes ship on two channels: per-clip OTIO metadata embedded in the timeline, plus a volumes.json sidecar in the bundle root. Daemon applies via Resolve’s undocumented TimelineItem.SetProperty("Volume", dB), with the sidecar as the recovery path when SetProperty no-ops on certain Studio builds.
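
A sidecar writer could be as small as the sketch below. The JSON layout, the function names, and the assumption that Showspring stores linear gain internally are all hypothetical; the only fixed piece is the standard conversion from linear amplitude gain to decibels, 20·log10(gain).

```python
import json
import math
from typing import Dict


def gain_to_db(gain: float, floor_db: float = -96.0) -> float:
    """Convert linear amplitude gain to dB, clamping silence to a floor."""
    if gain <= 0:
        return floor_db
    return max(floor_db, 20.0 * math.log10(gain))


def volumes_sidecar(clip_gains: Dict[str, float]) -> str:
    """Serialise per-clip levels in both units so any NLE script can use them."""
    payload = {
        "version": 1,  # assumed field, not confirmed by the source
        "volumes": {
            clip_id: {"gain": gain, "db": round(gain_to_db(gain), 2)}
            for clip_id, gain in clip_gains.items()
        },
    }
    return json.dumps(payload, indent=2, sort_keys=True)
```

Writing both the linear value and the dB value keeps the recovery path independent of whichever unit a given editor expects.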

🔗 Round-trip via markers

Every clip in the bundle ships with a Resolve marker carrying an opaque Showspring identifier in its custom_data field. Resolve’s GetMarkerByCustomData() call lets a future flow walk an editor-modified timeline, recover the IDs, diff against the original, and write timing changes back to Showspring’s database. Infrastructure shipped; the round-trip flow is next.

🛠 Resolve API survival kit

recordFrame is absolute (offset by GetStartFrame()). CreateEmptyTimeline collides on name. DeleteTimelines is a no-op (use DeleteClips). SaveProject() mandatory or work evaporates. 1V/1A default needs AddTrack before high trackIndex. Integration code wraps each gotcha so the upstream call sites stay clean.

Further reading: The full story — the manifest contract, the tempfile-trap that broke the first daemon, the Content-Length hang in Python’s stdlib HTTP server, the Defender false-positive that forced the rewrite, and the “don’t kill the tray” self-kill bug — is written up in the Building the DaVinci Resolve Integration deep-dive on overdigital.ai.

iPad & PC Image Watcher

A dual-source image pipeline that monitors Google Drive (for iPad drawings) and a local PC folder simultaneously. New images are automatically detected and queued for approval — no manual upload needed. Combined with start/end image slots, this enables a smooth hand-drawn-to-AI-video workflow.

Dual Source Monitoring

Google Drive polling (every 10s) for iPad-sourced images plus browser-based local folder watching (File System Access API) for PC files. Both feed into the same approval queue with LED status indicators.

Approval Workflow

Every detected image queues for visual approval: side-by-side comparison of current vs. new image, with options to set as start image, end image, or discard. A visual clip picker grid allows reassigning to any scene.

Start & End Images

Each scene now supports separate start and end frames for image-to-video generation. Swap, edit, or AI-modify either image independently. The video engine uses both to produce smoother motion between key frames.

Drive Export

Export character references, location images, and scene references to Google Drive per clip — creating organized folders for external AI tools or team collaboration.

Grok 4 deep-research scripts

A new script generation mode that leverages Grok 4’s live web search to produce fact-heavy, current-events scripts. Instead of relying solely on the show bible, Research & Debate mode conducts real-time web research on the episode topic, then generates scripts grounded in verified facts and recent developments.

Live Web Research

Grok 4 searches the web in real-time for the episode topic, pulling current facts, statistics, and developments. The research context is injected directly into script generation for factual accuracy.

Debate-Style Dialogue

Characters engage in informed discussion with real data points. The show bible context ensures characters stay in-character while discussing factual content, producing educational yet entertaining scripts.

Configurable LLM models per step

v1.1 introduces per-step model selection. Choose which LLM powers each creative stage — Episode Ideas, Episode Scripts, Shorts Ideas, and Shorts Scripts can each use a different model. Switch between local llama.cpp models (zero API cost) and cloud models (Gemini, Claude) depending on your quality and speed requirements.

Episode & Shorts Ideas

Choose from Gemini 2.5 Flash, Llama 3.1, Gemma 12B, Qwen 8B, or Claude. Local models run free on the RTX 5090; cloud models deliver higher quality for production batches. The model selector appears directly in the Idea Lab.

Episode & Shorts Scripts

Script generation supports the same model selection. Each model brings a different narrative style — Gemini excels at concise dialogue, Claude at long-form structure, and local models at rapid iteration.

Where it sits in the market

The AI video market is fragmented into tools that each solve one piece of the puzzle. Generation engines produce stunning clips but can’t build a story. Automated pipelines assemble videos fast but rely on stock footage. Avatar platforms nail corporate presentations but can’t do cinematic scenes. Only one other tool even attempts full episodes, and it is animation-only. Showspring spans all of these categories.

Feature-by-feature comparison

We picked the strongest competitor from each category. Every cell reflects publicly documented capabilities as of April 2026.

How it all connects

A hybrid architecture where cloud APIs deliver the highest-quality generation (Veo, Gemini, ElevenLabs) while a local GPU provides open-source alternatives and hardware encoding. The VPS orchestrates everything, and each AI agent is purpose-built for its stage of the pipeline.

Technology deep dive

Every component was built from scratch — no video editing frameworks, no SaaS dependencies, no drag-and-drop website builders. Pure Node.js, vanilla JavaScript, and ffmpeg.

Hybrid Cloud + Local Architecture

Cloud APIs (Google Veo, Gemini) deliver the highest-quality video and image generation, while a local RTX 5090 (32 GB VRAM) provides open-source alternatives and handles NVENC encoding. The system is designed to scale with new cloud models as they become available.

ffmpeg Compositing Engine

Each clip is assembled with complex filter graphs: per-stream volume with keyframe expressions, 4-input amix with explicit weights, sample rate normalization, pad/trim alignment, and cfr frame timing — all generated dynamically per clip.

Multi-LLM Orchestration

Three local models (Llama, Gemma, Qwen) served by llama.cpp via a llama-swap router run in parallel for brainstorming. Cloud APIs (Gemini, Grok, Claude) provide additional perspectives. A judge model synthesizes competing outputs into a final creative decision.
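The fan-out-then-judge pattern can be sketched in a few lines. This is not the production orchestrator: `brainstorm`, the model objects, and the judge are hypothetical injected functions, and the stubs below stand in for real llama-swap or cloud API calls.

```javascript
// Sketch: send one prompt to several models in parallel, then let a judge
// choose among the pitches. `models` and `judge` are injected (hypothetical).
async function brainstorm(prompt, models, judge) {
  const settled = await Promise.allSettled(
    models.map(async (m) => ({ model: m.name, text: await m.generate(prompt) }))
  );
  // Keep only successful responses so one failing model does not block the rest.
  const pitches = settled
    .filter((r) => r.status === "fulfilled")
    .map((r) => r.value);
  if (pitches.length === 0) throw new Error("no model produced a pitch");
  return judge(prompt, pitches);
}

// Stubs for illustration only.
const stubModels = [
  { name: "llama", generate: async (p) => `llama idea for ${p}` },
  { name: "gemma", generate: async () => { throw new Error("offline"); } },
  { name: "qwen", generate: async (p) => `qwen idea for ${p}` },
];
// Stub judge: picks the longest pitch. A real judge would be an LLM call.
const stubJudge = async (_prompt, pitches) =>
  pitches.reduce((a, b) => (b.text.length > a.text.length ? b : a));
```

`Promise.allSettled` is used rather than `Promise.all` so that a single offline model degrades the brainstorm instead of failing it.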

ComfyUI Workflows

Local open-source video models run via ComfyUI on the RTX 5090: WAN 2.2 for image-to-video and LTX 2.3 for longer clips. These complement cloud models like Veo, giving creators the choice between speed, cost, and quality depending on the scene.

Intelligent Cache System

A 5 GB LRU cache on the VPS holds active assets. Google Drive provides permanent storage. Cache eviction never deletes files that haven't been backed up. A scheduled cleanup job runs every 6 hours, backing up unbacked assets before evicting.

Show Bible System

A living knowledge base that grows with every episode. Tracks character arcs, running gags, location details, dialogue patterns, and YouTube analytics. Automatically condensed for local models via Qwen 8B to fit within smaller context windows.

Multi-Platform Social Engine

OAuth 2.0 flows for YouTube, Facebook, Instagram, TikTok, and X/Twitter. Each platform has dedicated publish functions handling format requirements, API quirks, and token refresh. An auto-publisher polls every 60 seconds to fire scheduled posts.
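A polling publisher boils down to "which scheduled posts are due right now?" evaluated once per tick. The sketch below assumes a post shape of `{ id, platform, publishAt, status }` and hypothetical `loadPosts` and `publish` functions; none of these names come from the actual codebase.

```javascript
// Selection step a polling auto-publisher might run on each tick.
// The post shape ({ id, platform, publishAt, status }) is an assumption.
function selectDuePosts(posts, now = Date.now()) {
  return posts
    .filter((p) => p.status === "scheduled" && Date.parse(p.publishAt) <= now)
    .sort((a, b) => Date.parse(a.publishAt) - Date.parse(b.publishAt));
}

// Starts the 60-second loop; `loadPosts` and `publish` are injected async
// functions (hypothetical). One failed post does not stop the others.
function startAutoPublisher(loadPosts, publish, intervalMs = 60_000) {
  return setInterval(async () => {
    for (const post of selectDuePosts(await loadPosts())) {
      try {
        await publish(post);
      } catch (err) {
        console.error(`publish failed for ${post.id}:`, err.message);
      }
    }
  }, intervalMs);
}
```

Separating the pure selection function from the timer keeps the due-post logic testable without waiting on a real clock.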

Cross-Platform Analytics

Aggregates views, likes, comments, and shares from all five platforms into a unified dashboard. Daily snapshots build 30-day trend charts. YouTube research reports analyze channel performance, competitor positioning, and optimal posting schedules.
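The daily snapshot idea can be illustrated with a small fold over per-platform rows. The row shape and function names here are assumptions for the example; the real schema is not shown on this page.

```javascript
// Sketch: fold per-platform metric rows into one daily snapshot.
// Row shape and platform keys are illustrative assumptions.
const METRICS = ["views", "likes", "comments", "shares"];

function buildSnapshot(date, rows) {
  const totals = Object.fromEntries(METRICS.map((m) => [m, 0]));
  for (const row of rows) {
    // Treat a metric a platform does not report as zero.
    for (const m of METRICS) totals[m] += row[m] ?? 0;
  }
  return { date, totals };
}

// Keep only the most recent `days` snapshots for the trend chart.
// Dates are ISO strings (YYYY-MM-DD), so string order is date order.
function trendWindow(snapshots, days = 30) {
  return [...snapshots].sort((a, b) => a.date.localeCompare(b.date)).slice(-days);
}
```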

Resend Email Notifications

Branded HTML email alerts fire after every auto-publish: platform badge, clip title, direct link, and dashboard CTA. Missed-schedule alerts notify when a scheduled post fails or is overdue.

Ten AI models, one pipeline

No single model can do everything. Showspring orchestrates specialized models for each phase of production: local where possible, cloud where necessary. Per-step model selection lets each creative stage use a different LLM. v1.2 adds Grok 4 with live web search.

llama.cpp (Llama 3.1 / Gemma 12B / Qwen 8B)

Local LLMs for brainstorming, script writing, and location extraction. Zero API costs, unlimited iterations. Served on the RTX 5090 by llama.cpp behind a llama-swap router for fast model switching.

Gemini + Veo (Google Cloud)

The primary production engine for video (Veo), images, and script generation. Cloud models deliver the highest quality and are the default choice for published episodes.

Grok 4 (xAI)

The creative director’s judge and the Research & Debate engine. Evaluates pitches, conducts live web research, and generates fact-heavy scripts with real-time data.

Claude (Anthropic)

Alternative script writer for episodes that need a different narrative style. Strong at long-form structure and character consistency.

ElevenLabs

Voice synthesis for 7+ characters, each with a unique voice profile. Also generates sound effects and music tracks from text descriptions.

WAN 2.2 / LTX 2.3 (Local GPU)

Open-source video models running on the RTX 5090 via ComfyUI. A cost-effective local alternative for drafts, iterations, and experimentation before committing to cloud renders.

Z-Image-Turbo / SDXL

Fast image generation for scene creation. Sub-2-second generation via ComfyUI with 4-step sampling. Produces photorealistic starting frames.

Gemini 2.5 Flash

Fast, cost-effective model for shorts idea generation, metadata, and schedule recommendations. Serves as the default fallback when local models are unavailable.

Faster-Whisper (Transcription)

Word-level timestamp transcription for accurate chapter generation and subtitle creation. Runs locally on the RTX 5090 for zero-cost transcription.
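To show why word-level timestamps matter for subtitles, here is a minimal sketch that groups timed words into SRT cues. The input shape (`{ word, start, end }` in seconds) and the seven-word cue size are assumptions for illustration; the transcription step itself is not shown.

```javascript
// Format seconds as an SRT timestamp: HH:MM:SS,mmm
function srtTime(seconds) {
  const ms = Math.round(seconds * 1000);
  const pad = (n, w = 2) => String(n).padStart(w, "0");
  const h = Math.floor(ms / 3_600_000);
  const m = Math.floor((ms % 3_600_000) / 60_000);
  const s = Math.floor((ms % 60_000) / 1000);
  return `${pad(h)}:${pad(m)}:${pad(s)},${pad(ms % 1000, 3)}`;
}

// Group word-level timestamps into cues of at most `maxWords` words.
// The input shape ({ word, start, end } in seconds) is an assumption.
function wordsToSrt(words, maxWords = 7) {
  const cues = [];
  for (let i = 0; i < words.length; i += maxWords) {
    const chunk = words.slice(i, i + maxWords);
    const text = chunk.map((w) => w.word.trim()).join(" ");
    const start = srtTime(chunk[0].start);
    const end = srtTime(chunk[chunk.length - 1].end);
    cues.push(`${cues.length + 1}\n${start} --> ${end}\n${text}`);
  }
  return cues.join("\n\n") + "\n";
}
```

Because each cue starts and ends on real word boundaries, captions stay in sync with speech instead of drifting across fixed-length windows.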

YouTube + Social APIs

Publishes to YouTube, Facebook, Instagram, TikTok, and X via OAuth. Tracks cross-platform analytics and feeds performance data back into the show bible.

The manual effort this replaces

Showspring is not an API wrapper. It is a production-grade studio that replaces every specialist role in a traditional video content operation with end-to-end AI generation — from initial idea to multi-platform publish. The scale numbers below describe what the system does, and the section that follows describes what it would take to produce the same output by hand.

30K lines of frontend (vanilla JS, zero frameworks) and 19K+ lines of modular Node.js backend (22 routers, 11 services). No boilerplate, no generated scaffolding.

Every production step, every AI model, every platform publish, every analytics query has a dedicated API. OAuth flows for 5 social platforms, progress tracking, error recovery.

Episodes, clips, characters, locations, audio tracks, SFX, music, image history, gallery, platform tokens, publish records, analytics, podcasts, shorts schedules — all with migrations and foreign keys.

Full CI/CD pipeline via GitHub Actions. Every router tested against a fresh database. The test suite caught 9 serious bugs during the April 2026 refactor that the original 22-test suite had missed.

The Opportunity

AI video tools today are single-shot generators. You get a 5–10 second clip with no narrative continuity, no audio design, no character consistency, and no way to assemble it into a publishable episode or distribute it across platforms. The gap between “I can generate a cool clip” and “I can produce and distribute a multi-platform content operation” is enormous — and that gap is the product.

Showspring closes that gap. It orchestrates 10+ specialized AI models into a single end-to-end production pipeline: ideation, script writing, voice synthesis, character-consistent image generation, image-to-video, multi-track audio mixing, render, and multi-platform publish. The entire Doodle Cast YouTube channel — with its episodes, shorts, characters, and growing audience across five platforms — is produced and distributed end-to-end by this tool, with no writers, no animators, no voice actors, no editors, no sound designers, and no post-production house involved.

Manual Production Equivalent

What producing this content would take without AI

Every single episode Showspring ships — idea, script, character art, voice acting, animation, audio mix, thumbnail, multi-platform publish, and analytics — replaces the output of an entire traditional content studio. To produce the same volume and quality manually, a creator would need a cross-functional team across every specialty below, running in parallel, week after week.

Deep technical breakdown

Every design decision in Showspring had a real constraint behind it. This section walks through the shape of the implementation at a high level: module topology, data model, rate-limit strategy, the dynamic render engine, the hermetic test pipeline, the hybrid cloud + local GPU routing, and the non-destructive cache policy. The goal is to show why the system looks the way it does, not to hand out a runbook.

1. Modular Composition Root

The main entry point is a thin bootstrap: environment validation, middleware stack, layered rate limiters, session setup, and router registration. It holds essentially no business logic. All production behavior lives in 22 domain routers and 11 shared services underneath. Each router owns its validation, data access, and error responses; services are pure functional units callable from any router.
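The shape of a thin composition root might look like the sketch below. It is framework-free on purpose and entirely hypothetical: `validateEnv`, `createApp`, the environment keys, and the router objects are illustrative names, not the actual entry point.

```javascript
// Fail fast at boot if required configuration is missing.
function validateEnv(env, required) {
  const missing = required.filter((key) => !env[key]);
  if (missing.length > 0) {
    throw new Error(`missing environment variables: ${missing.join(", ")}`);
  }
}

// Wire middleware and routers together; no business logic lives here.
// Each router is assumed to own its own validation and data access.
function createApp({ env, required, middleware, routers }) {
  validateEnv(env, required);
  const routes = new Map();
  for (const [prefix, router] of Object.entries(routers)) {
    routes.set(prefix, router);
  }
  return { middleware: [...middleware], routes };
}
```

The point of the pattern is that adding a new domain means adding one entry to `routers`, with nothing else in the bootstrap changing.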

2. Layered Rate Limiting

Rate limiting is layered by cost class. A default limiter covers general API traffic. A much tighter limiter applies to cost-sensitive endpoints (LLM calls, image generation, and render dispatch) matched by both literal path and regex patterns. The tightest cap applies to authentication and OAuth callback flows to resist brute-force and credential-stuffing attempts. Static-asset bulk-fetch paths are excluded from API rate limiting. The expensive limiter runs before the global limiter so the global counter still sees every request.

Specific thresholds and endpoint lists are intentionally not published — tuning parameters that affect throttling behavior are treated as internal configuration.
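For readers who want a mental model of cost-class limiting, the sketch below classifies a request by literal path and regex, then applies a fixed-window counter per class. Every number and path in it is a placeholder invented for the example, and it simplifies the layered design to one class per request; it does not reflect the real thresholds or endpoint lists.

```javascript
// Placeholder limits per window: the real thresholds are not published.
const LIMITS = { auth: 5, expensive: 20, default: 100 };

// Cost classes matched by literal path and by regex (all paths hypothetical).
const AUTH_PATHS = new Set(["/api/login"]);
const AUTH_PATTERNS = [/^\/oauth\/[^/]+\/callback$/];
const EXPENSIVE_PATHS = new Set(["/api/render"]);
const EXPENSIVE_PATTERNS = [/^\/api\/(llm|images)\//];
const EXCLUDED_PATTERNS = [/^\/static\//];

function classify(path) {
  if (EXCLUDED_PATTERNS.some((re) => re.test(path))) return null;
  if (AUTH_PATHS.has(path) || AUTH_PATTERNS.some((re) => re.test(path))) return "auth";
  if (EXPENSIVE_PATHS.has(path) || EXPENSIVE_PATTERNS.some((re) => re.test(path))) return "expensive";
  return "default";
}

// Fixed-window counter keyed by client and cost class.
function createLimiter(windowMs = 60_000) {
  const hits = new Map();
  return function allow(clientId, path, now = Date.now()) {
    const cls = classify(path);
    if (cls === null) return true; // static assets bypass API limits
    const key = `${clientId}:${cls}`;
    const entry = hits.get(key);
    if (!entry || now - entry.start >= windowMs) {
      hits.set(key, { start: now, count: 1 });
      return true;
    }
    entry.count += 1;
    return entry.count <= LIMITS[cls];
  };
}
```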

3. Normalized Data Model

The persistence layer is a normalized relational schema grouped into five concerns. The database engine supports concurrent reads during long-running writes (render jobs, image generation), and foreign-key relationships keep referential integrity explicit. Every table is exercised by the integration test suite against a fresh ephemeral database per run.

4. Dynamic Render Engine

Render is not a wrapper around a preset. Every episode, short, and podcast clip is composited by building a filter graph at runtime from the clip's tracks, volume automation, and timing metadata. Four audio streams are mixed per clip with explicit per-stream weights and fade/duck automation, then paired with the video stream and handed to a hardware-accelerated encoder.

Render pipeline stages: sample-rate normalize → frame rate → H.264 out

Each volume envelope, fade, duck, and weight is generated per clip from the clip's metadata in the database, not hand-authored. The same engine handles episodes, shorts, and podcast clips via a shared filter builder, which is why a 5-second short and a 12-minute episode both render through the same code path.
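Generating a filter graph from metadata can be sketched as plain string building. The example below is a simplified illustration, not the shared filter builder itself: the track fields (`gain`, `weight`, `fadeIn`) are hypothetical, audio inputs are assumed to start at ffmpeg input index 1 with video at index 0, and ducking automation is left out. The filters used (`aresample`, `volume`, `afade`, `amix`) are standard ffmpeg audio filters.

```javascript
// Build an ffmpeg -filter_complex string for one clip's audio tracks.
// Track fields (gain, weight, fadeIn) are hypothetical metadata names.
function buildAudioGraph(tracks, sampleRate = 48000) {
  // One chain per track: normalize the sample rate, apply gain, optional fade-in.
  const chains = tracks.map((t, i) => {
    const filters = [`aresample=${sampleRate}`, `volume=${t.gain}`];
    if (t.fadeIn > 0) filters.push(`afade=t=in:st=0:d=${t.fadeIn}`);
    // Assumes input 0 is the video, so audio inputs start at index 1.
    return `[${i + 1}:a]${filters.join(",")}[a${i}]`;
  });
  // Mix all labeled streams with explicit per-stream weights.
  const inputs = tracks.map((_, i) => `[a${i}]`).join("");
  const weights = tracks.map((t) => t.weight).join(" ");
  const mix = `${inputs}amix=inputs=${tracks.length}:weights='${weights}':normalize=0[mix]`;
  return [...chains, mix].join(";");
}
```

Because the graph is just a string derived from track data, the same function serves a 5-second short and a 12-minute episode; only the metadata changes.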

5. Hermetic Integration Test Pipeline

The test suite is fully hermetic: it boots the real server binary against an ephemeral database, stubs every external AI and platform API, and exercises each router end-to-end over real HTTP. 113 integration cases run on every CI build. Because the runner uses the production code paths, regressions in rate limiting, middleware, and business logic all surface before merge.
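The stubbing half of a hermetic test can be shown with dependency injection. The sketch below leaves out the HTTP layer and the ephemeral database described above and focuses only on swapping an external AI client for a stub; `createVoiceHandler`, `createTtsStub`, and the `tts.synthesize` interface are hypothetical names.

```javascript
// Handler under test: depends on an injected `tts` client instead of
// importing a real SDK, so tests can swap in a stub. Names are hypothetical.
function createVoiceHandler({ tts }) {
  return async function handle(request) {
    if (!request.text) return { status: 400, body: { error: "text is required" } };
    const audio = await tts.synthesize(request.text);
    return { status: 200, body: { bytes: audio.length } };
  };
}

// Stub that records calls and never touches the network, which keeps
// the test deterministic and free of API cost.
function createTtsStub() {
  const calls = [];
  return {
    calls,
    synthesize: async (text) => {
      calls.push(text);
      return new Uint8Array(text.length);
    },
  };
}
```

A test then asserts on both the response and `stub.calls`, which verifies that the handler called the external service exactly as intended.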

6. Hybrid Cloud + Local GPU Topology

The orchestrator delegates GPU-bound work (video generation, image synthesis, encoding, transcription, adaptive streaming prep) to a local GPU host over a private tunnel, while cost-sensitive and highest-quality work flows to cloud AI APIs. A health poller keeps cloud fallbacks warm, so any local outage degrades gracefully rather than blocking the pipeline.

Cloud APIs:

· Frontier LLM inference

· Production image generation

· Voice synthesis

· Platform publishing APIs

Local GPU host:

· Local LLM inference for drafts

· Fast iterative image generation

· Hardware-accelerated encoding

· Transcription & HLS segmentation

Routing is per-job, not per-user. The same episode can fan out cloud video + local draft image + cloud voice + local encoding based on cost, quality, and availability.
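Per-job routing with a health-based fallback might be sketched as follows. The job kinds, target names, and health shape are assumptions for illustration; real routing also weighs cost and quality, which this sketch reduces to a static preference table.

```javascript
// Hypothetical preference table: where each job kind runs when all is healthy.
const PREFERRED = {
  "video:final": "cloud",
  "image:draft": "local",
  "voice": "cloud",
  "encode": "local",
};

// Route one job; fall back to cloud when the local GPU host is down.
function routeJob(kind, health) {
  const preferred = PREFERRED[kind] ?? "cloud";
  if (preferred === "local" && !health.localUp) return "cloud";
  return preferred;
}

// Fan one episode's jobs out across targets.
function planEpisode(kinds, health) {
  return Object.fromEntries(kinds.map((kind) => [kind, routeJob(kind, health)]));
}
```

With the local host up, `planEpisode(["video:final", "image:draft", "voice", "encode"], { localUp: true })` yields a mixed plan; with it down, every job lands on cloud rather than stalling.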

7. Non-Destructive Cache Policy

Cache eviction normally deletes whatever is coldest. That is unacceptable here — a render that has not yet been archived cannot be recreated without re-running the whole GPU pipeline. Eviction therefore follows a strict backup-first order: archive to permanent storage, verify the copy, then reclaim local space. A crash mid-cycle never loses data.

If the remote archive is unreachable during a cleanup cycle, the cache simply grows a little past its cap until the next cycle — which is far cheaper than losing a not-yet-backed-up render.
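The backup-first order can be sketched over an in-memory index. This is a simplified, synchronous illustration: `runCleanup`, the entry shape (`{ path, size, lastAccess, backedUp }`), and the injected `archive` and `verify` functions are hypothetical, and real file deletion is omitted.

```javascript
// Evict least-recently-used entries until under the cap, but only after
// each one has been archived and verified. Entry shape is an assumption.
function runCleanup(entries, capBytes, { archive, verify }) {
  let used = entries.reduce((sum, e) => sum + e.size, 0);
  const evicted = [];
  // Coldest first (least recently used).
  const byAge = [...entries].sort((a, b) => a.lastAccess - b.lastAccess);
  for (const entry of byAge) {
    if (used <= capBytes) break;
    if (!entry.backedUp) {
      try {
        archive(entry);
        entry.backedUp = verify(entry);
      } catch {
        entry.backedUp = false; // archive unreachable: keep the file
      }
    }
    if (!entry.backedUp) continue; // never evict an unverified file
    used -= entry.size;
    evicted.push(entry.path);
  }
  return { evicted, used };
}
```

If every remaining entry fails to archive, the loop finishes with `used` still above the cap, which matches the behavior described above: the cache grows past its limit until the next cycle rather than dropping an unarchived render.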

Why This Matters

None of this is required to “make an AI video.” It is required to run one in production, every day, against rate-limited third-party APIs, on a shared VPS behind a reverse proxy, with a cache that cannot afford to lose files, with a test suite that has to be deterministic because it runs on every change. These are the details that separate a weekend prototype from a production studio shipping AI-generated content on a real schedule.

See it in action

Watch the episodes produced entirely by Showspring on YouTube.

Visit The Doodle Cast ↗