1. System Overview

Create Studio is an AI-powered video ad generator that allows users to upload photos of businesses, arrange them on an NLE-style timeline, add AI-generated voiceover and music, and render broadcast-quality video ads — all from a browser.

The system runs on a hybrid architecture spanning two physical locations: a DigitalOcean VPS in the cloud and a local workstation with an NVIDIA RTX 5090 GPU. The architecture is designed so that every feature works when the GPU PC is offline, with the 5090 providing enhanced performance when available.

Key Design Principles

- Graceful degradation: Every feature works without the 5090. Cloud APIs (ElevenLabs, Veo 3, Gemini) provide fallbacks.

- Transparent switching: The is5090Online() health check (30s cache) automatically routes to the best available backend.

- Zero-downtime transitions: The 5090 can go offline mid-session (e.g., user starts sim racing) without breaking in-progress renders.

2. Request Flow & Routing

Every request from the browser follows this path:

Session & Authentication

Multi-user auth with session cookies. POST /auth validates credentials, stores req.session.user. All API routes check requireAuth middleware. Gallery items are tagged per-user for filtering.
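The requireAuth check and per-user gallery filtering described above might look roughly like the following. This is a minimal sketch, not the actual server.js code: only requireAuth and req.session.user come from the text, while the filterGalleryForUser helper, the item.user field, the error message, and the route path in the usage comment are assumptions.

```javascript
// Sketch of a session-check middleware. Only `requireAuth` and
// `req.session.user` are named in the original; everything else is assumed.
function requireAuth(req, res, next) {
  if (req.session && req.session.user) {
    return next(); // authenticated: continue to the route handler
  }
  // No session user: reject instead of falling through to the route.
  res.status(401).json({ success: false, error: 'Not authenticated' });
}

// Hypothetical helper: keep only the gallery items tagged with this user.
function filterGalleryForUser(items, user) {
  return items.filter((item) => item.user === user);
}

// Assumed Express wiring:
//   app.get('/api/gallery', requireAuth, (req, res) => {
//     res.json(filterGalleryForUser(allItems, req.session.user));
//   });
```

Keeping the check in one middleware means a route cannot forget it as long as it is registered with requireAuth in front of the handler.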

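// server.js: auth flow

const USERS = { rick: 'rick', jens: 'jens' };

app.post('/auth', (req, res) => {
  const { username, password } = req.body ?? {};
  // Require a string password and a known username, so a request with both
  // fields missing cannot match an undefined lookup.
  const valid = typeof password === 'string' &&
    Object.hasOwn(USERS, username) && USERS[username] === password;
  if (!valid) {
    // Always answer: without this branch a failed login never gets a response.
    return res.status(401).json({ success: false });
  }
  req.session.user = username;
  res.json({ success: true, user: username });
});

3. GPU Health Check & Service Discovery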

The VPS tracks the 5090's availability through a cached, on-demand health check. This is the foundation of the hybrid architecture: every subsystem queries it before deciding where to route work.

// Cached 5090 health check

let gpu5090Online = false;
let gpu5090LastCheck = 0;
const GPU_CHECK_INTERVAL = 30000; // 30 seconds

async function is5090Online() {
  // Serve the cached answer while it is still fresh.
  if (Date.now() - gpu5090LastCheck < GPU_CHECK_INTERVAL)
    return gpu5090Online;
  try {
    const r = await fetch(`${IMAGE_API_URL}/health`, {
      signal: AbortSignal.timeout(5000) // give up after 5 seconds
    });
    gpu5090Online = r.ok;
  } catch {
    // Timeout or network error: treat the GPU PC as offline.
    gpu5090Online = false;
  }
  gpu5090LastCheck = Date.now();
  return gpu5090Online;
}

The 30-second cache prevents hammering the health endpoint during burst operations while keeping the state reasonably fresh. The 5-second timeout caps how long the check itself can block a render pipeline.
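Because the cached status can be up to 30 seconds stale, a caller probably also needs to handle the case where the 5090 disappears between the check and the actual work. The sketch below shows one way a subsystem could combine the health check with a cloud fallback. The routeWithFallback name and its injected isOnline, runLocal, and runCloud callbacks are hypothetical and do not appear in the original code.

```javascript
// Sketch: run a task on the 5090 when the health check reports it online;
// otherwise, or if the local attempt throws, fall back to the cloud.
// `isOnline`, `runLocal`, and `runCloud` are injected, hypothetical callbacks.
async function routeWithFallback({ isOnline, runLocal, runCloud }) {
  if (await isOnline()) {
    try {
      return { backend: 'local', result: await runLocal() };
    } catch (err) {
      // The cached status may be stale, so the GPU PC can be gone by now.
      // Fall through to the cloud path instead of failing the request.
    }
  }
  return { backend: 'cloud', result: await runCloud() };
}
```

A caller would pass is5090Online as isOnline and wrap its local and cloud implementations in the other two callbacks.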

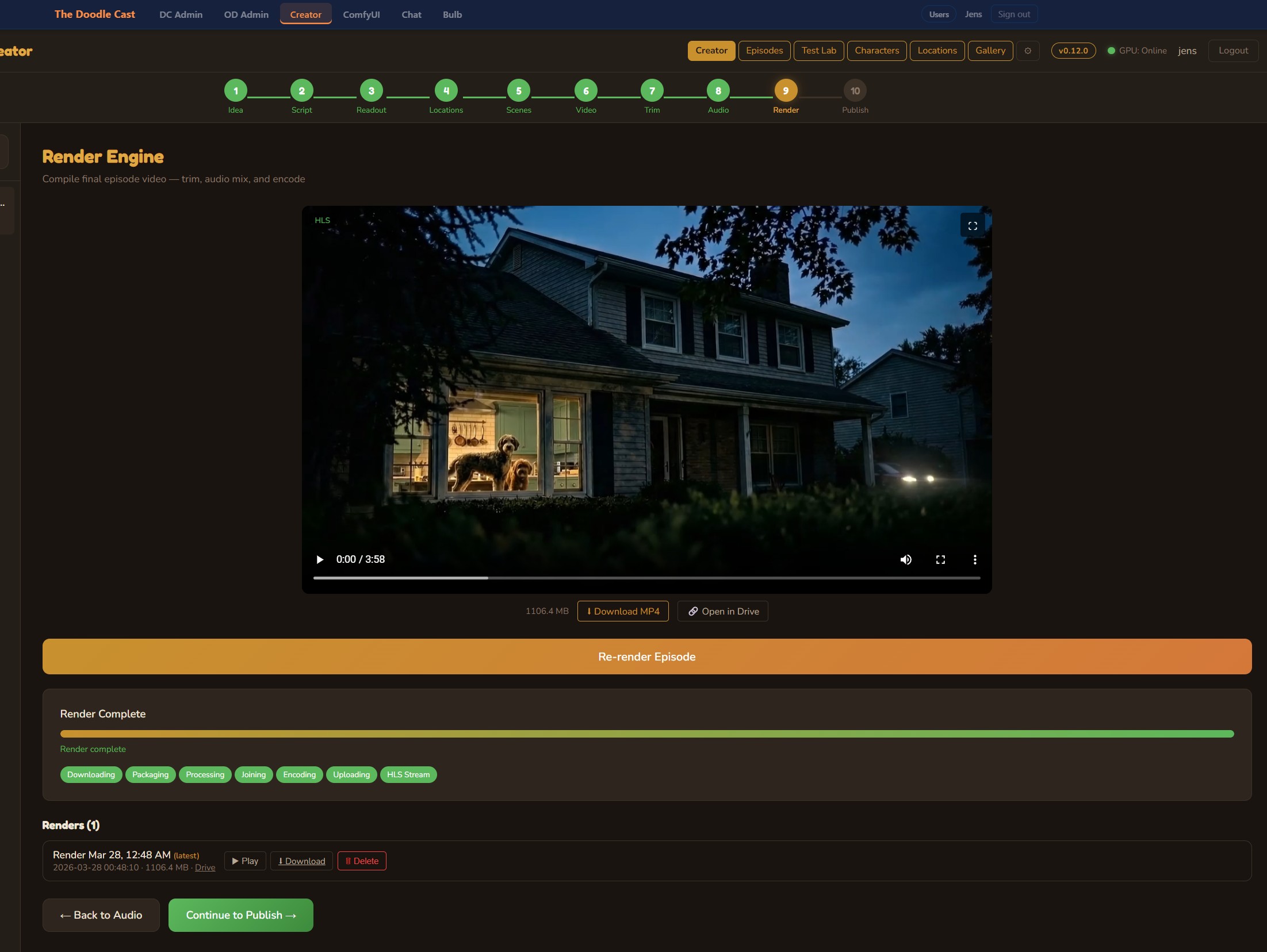

4. Video Rendering Pipeline

The render pipeline is the most complex subsystem. It builds an FFmpeg filter_complex graph from the timeline state, then decides where to execute it based on 5090 availability.
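To make the idea of building a filter_complex graph from timeline state concrete, here is a deliberately small sketch that only scales and concatenates video clips. The real pipeline (Ken Burns moves, text overlays, audio) is certainly more involved, and the clip shape ({ inputIndex }), the default frame size, and the outv label are assumptions for illustration.

```javascript
// Sketch: build a filter_complex string that scales each timeline clip to a
// common frame size and concatenates the clips in timeline order.
// The clip shape ({ inputIndex }) and the "outv" label are assumptions.
function buildFilterComplex(clips, width = 1920, height = 1080) {
  if (clips.length === 0) throw new Error('timeline has no clips');
  // One chain per clip: pick the input's video stream, normalize its size.
  const chains = clips.map(
    (clip, i) => `[${clip.inputIndex}:v]scale=${width}:${height},setsar=1[v${i}]`
  );
  const labels = clips.map((_, i) => `[v${i}]`).join('');
  // concat takes the segment count (n) and the streams per segment (v, a).
  chains.push(`${labels}concat=n=${clips.length}:v=1:a=0[outv]`);
  return chains.join(';');
}
```

The resulting string would be passed to FFmpeg along the lines of `ffmpeg -i a.mp4 -i b.mp4 -filter_complex "<graph>" -map "[outv]" out.mp4`. Since the graph is plain text, the same string can run on either the VPS or the 5090.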

4.1 FFmpeg Offload to 5090

When the 5090 is online, the VPS offloads FFmpeg rendering for dramatically faster encode times (GPU-accelerated vs. VPS CPU). The process works via a fully HTTP-based transfer protocol — no SSH/SCP required:

Font Path Mapping

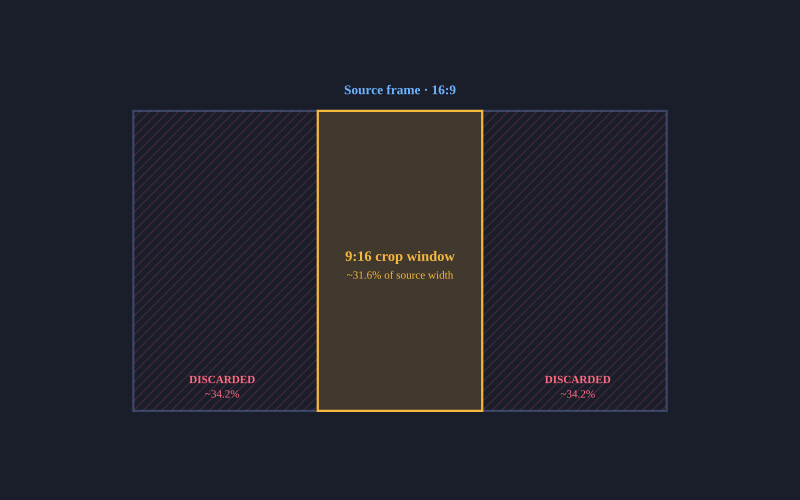

The VPS builds filter_complex strings with Linux font paths. Before sending to the 5090, these are mapped to Windows equivalents with escaped colons (since : is a delimiter in FFmpeg filter syntax):

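const WIN_FONT_MAP = {
  // The backslash is doubled because a bare '\:' inside a JS string literal
  // collapses to ':' and the escape would be lost.
  '/usr/share/fonts/.../OpenSans-Bold.ttf':
    'C\\:/Windows/Fonts/arialbd.ttf',
  '/usr/share/fonts/.../DejaVuSerif.ttf':
    'C\\:/Windows/Fonts/times.ttf',
  // ... 12 mappings total
};

5. Hybrid Music Generation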

Music generation uses a dispatcher pattern that routes to the best available engine based on user preference and 5090 availability.

ACE-Step (local, on the 5090)

- Free, unlimited generations

- Better quality for longer tracks

- Lazy-loaded on first request (~60s)

- Retry loop: 7 attempts, 15s intervals

- Output: WAV, variable length

ElevenLabs (cloud)

- Pay-per-generation

- Always available, no warmup

- Max ~22 seconds per generation

- API: POST /v1/sound-generation

- Output: MP3, fixed duration
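The dispatcher pattern and the retry loop listed above could be sketched as follows. This is an illustration under stated assumptions rather than the actual implementation: the generateMusic name, the preference values, and the engines.local and engines.cloud callbacks are hypothetical. It also assumes the 7 attempts at 15-second intervals wrap the local generation call while the lazy-loaded model warms up.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Sketch of the dispatcher. `engines.local` (the 5090 engine) and
// `engines.cloud` (the cloud API) are hypothetical async functions, and the
// `preference` values are assumptions.
async function generateMusic(request, {
  preference, isOnline, engines, attempts = 7, intervalMs = 15000,
}) {
  if (preference !== 'cloud' && await isOnline()) {
    // The local model is lazy-loaded, so early attempts may fail while it
    // warms up. Retry a fixed number of times before giving up.
    for (let attempt = 1; attempt <= attempts; attempt++) {
      try {
        return { engine: 'local', audio: await engines.local(request) };
      } catch (err) {
        if (attempt < attempts) await sleep(intervalMs);
      }
    }
  }
  // User asked for cloud, GPU offline, or every local attempt failed.
  return { engine: 'cloud', audio: await engines.cloud(request) };
}
```

With the defaults, the local path waits up to about 90 seconds between attempts (6 waits of 15 seconds) before falling back, which covers the ~60-second lazy load.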

6. AI Video Clip Generation

Create Studio supports three clip modes: Ken Burns (zoom/pan on static images), AI Image-to-Video (I2V), and AI Text-to-Video (T2V). The AI modes use different backends based on availability:

| Model | Location | I2V | T2V | Duration | Availability |
|---|---|---|---|---|---|
| Google Veo 3 | Cloud (Google API) | ✓ | ✓ | 4-8 seconds | Always |
| Wan2.1 14B | Local (5090 GPU) | ✓ | ✓ (via SDXL) | ~5 seconds | 5090 only |

The UI dynamically shows/hides the Wan2.1 option based on 5090 status. When offline, clips default to Veo 3 and the "Advanced" toggle is hidden. The VPS can call Veo 3 directly via the Google Generative AI REST API without needing the 5090.
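On the server side, the same rule can be applied when a job requests the local model but the 5090 has gone offline in the meantime. The sketch below is an assumption about how that might be handled: the resolveClipModel name, the model identifiers, and the warning text are all hypothetical. The returned warning is the kind of entry that could be appended to a job's warnings array.

```javascript
// Sketch: pick the clip backend and produce a warning when the requested
// local model is unavailable. Model ids and message text are assumptions.
function resolveClipModel(requested, gpuOnline) {
  if (requested === 'wan2.1' && !gpuOnline) {
    return {
      model: 'veo3',
      warning: 'Wan2.1 unavailable (5090 offline); used Veo 3 instead',
    };
  }
  return { model: requested, warning: null };
}
```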

7. LLM Script Generation

Voiceover scripts and ad copy are generated via LLM with a two-tier fallback:

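async function callLLM(prompt, timeoutMs = 15000) {
  // Try Ollama first (local, free, fast when available)
  const controller = new AbortController();
  const timer = setTimeout(() => controller.abort(), 8000);
  try {
    // Request options are elided in the original; they need to include
    // controller.signal for the 8-second abort to take effect.
    const res = await fetch(OLLAMA_URL, { ... });
    if (res.ok) return (await res.json()).response?.trim();
  } catch {
    // Ollama unreachable or timed out: fall through to Gemini.
  } finally {
    clearTimeout(timer); // clear the timer on failure too
  }

  // Fallback: Gemini API (always available)
  try {
    const res = await fetch(
      `https://generativelanguage.googleapis.com/v1beta/models/gemini-2.0-flash:generateContent?key=${KEY}`,
      {
        method: 'POST',
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify({ contents: [{ parts: [{ text: prompt }] }] }),
        // Assumption: the otherwise unused timeoutMs bounds this call.
        signal: AbortSignal.timeout(timeoutMs)
      }
    );
    if (res.ok) {
      const data = await res.json(); // the original read `data` without defining it
      return data?.candidates?.[0]?.content?.parts?.[0]?.text?.trim();
    }
  } catch {}
  return null;
}

8. Job Lifecycle & Data Model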

// Job data shape (data/jobs.json)

{
  id: "uuid",
  user: "jens",
  brandName: "Joe's Pizza",
  status: "processing", // pending | processing | done | error | paused
  step: "Rendering on 5090 GPU PC...",
  progress: 67, // 0-100
  outputUrl: "/videos/uuid.mp4",
  error: null,
  createdAt: "2026-03-13T...",
  completedAt: null,
  comment: "",
  rating: 0,
  driveFileId: "1abc...",
  driveFileSize: 45000000,
  warnings: [] // AI clip fallback warnings
}

9. NLE Timeline Editor

The editor UI follows a traditional non-linear editing layout with a media library panel and a multi-track timeline:

10. Deployment & Infrastructure

VPS (DigitalOcean)

| Component | Value |
|---|---|
| OS | Ubuntu 24.04 LTS |
| Runtime | Node.js + PM2 (cluster) |
| Proxy | Apache 2.4 + SSL |
| Port | internal |
| Domain | create.overdigital.ai |
| Storage | Google Drive (OAuth2) |
| Deploy | SCP + PM2 restart |

GPU Workstation (RTX 5090)

| Component | Value |
|---|---|
| OS | Windows 11 |
| GPU | NVIDIA RTX 5090 (32GB) |
| Runtime | Python Flask + CUDA |
| Port | internal |
| Tunnel | Cloudflare (images.*) |
| Startup | Windows Scheduled Task |
| FFmpeg | Shared build in PATH |

File Transfer Protocol

All file transfers between VPS and 5090 use HTTP — no SCP/SSH required from the 5090 side. This avoids issues with SSH key access in Windows Scheduled Task contexts:
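As a rough illustration of HTTP-only transfer, the token-based download URLs mentioned under Lessons Learned could be backed by something like the sketch below. It is not the actual protocol. The in-memory Map, the 10-minute TTL, single-use redemption, and the function names are all assumptions for illustration.

```javascript
const nodeCrypto = require('node:crypto');

// Sketch: single-use, expiring download tokens. The in-memory Map, the
// 10-minute TTL, and the function names are assumptions for illustration.
const downloadTokens = new Map();

// Called by the sender: register a file and get an unguessable token back.
function issueDownloadToken(filePath, ttlMs = 10 * 60 * 1000, now = Date.now()) {
  const token = nodeCrypto.randomBytes(16).toString('hex');
  downloadTokens.set(token, { filePath, expiresAt: now + ttlMs });
  return token;
}

// Called by the download route: resolve a token to a file path, or null.
function redeemDownloadToken(token, now = Date.now()) {
  const entry = downloadTokens.get(token);
  if (!entry) return null;
  downloadTokens.delete(token); // single use, even when already expired
  return now < entry.expiresAt ? entry.filePath : null;
}
```

The job payload then carries short URLs containing the token instead of base64 file contents, and the receiving side fetches each file with a plain HTTP GET. Note that under PM2 cluster mode an in-memory map is per process, so a shared store would be needed for tokens to resolve on any worker.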

11. Technology Stack

Frontend

- Vanilla HTML/CSS/JS (single file)

- ~7000 lines in index.html

- CSS custom properties for theming

- HTML5 Drag & Drop API

- Web Audio API for previews

VPS Backend

- Node.js + Express

- PM2 (cluster mode)

- express-session

- Google APIs (Drive, Veo 3)

- FFmpeg (fallback render)

5090 GPU Server

- Python Flask

- PyTorch + CUDA

- diffusers 0.37.0

- ACE-Step, SDXL, Wan2.1

- FFmpeg (primary render)

External APIs

- ElevenLabs (TTS + SFX)

- Google Veo 3 (video gen)

- Google Gemini (LLM fallback)

- Google Drive (storage)

- Google Places (photo import)

12. Lessons Learned

- SCP doesn't work reliably from Windows Scheduled Tasks: SSH key access differs between interactive and service contexts. HTTP-based file transfer solved this permanently.

- FFmpeg filter_complex and Windows paths don't mix: the ":" in "C:" is a filter delimiter, so it must be escaped as "\:".

- Base64 in JSON has ~33% overhead: Sending 10 photos as base64 turned a 30MB payload into 40MB+. Token-based download URLs reduced the submission payload to 6KB.

- Cloudflare blocks Python urllib: The default Python-urllib/3.x user agent triggers Cloudflare's bot detection. A custom User-Agent header fixed 403 errors.

- Design for offline-first: Making every feature work without the GPU from the start would have saved significant refactoring. The hybrid fallback pattern (try local → fallback to cloud) should be the default architecture, not an afterthought.
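The colon-escaping lesson, together with the font map from section 4.1, can be illustrated with a short sketch. The function names and EXAMPLE_FONT_MAP are hypothetical, and the Linux path is a placeholder, not one of the real WIN_FONT_MAP entries.

```javascript
// Escape every ":" so FFmpeg does not read it as an option delimiter.
function escapeFilterColons(path) {
  return path.replace(/:/g, '\\:');
}

// Hypothetical map: the Linux path below is a placeholder, not a real
// entry from WIN_FONT_MAP.
const EXAMPLE_FONT_MAP = {
  '/usr/share/fonts/example/OpenSans-Bold.ttf':
    escapeFilterColons('C:/Windows/Fonts/arialbd.ttf'),
};

// Replace each known Linux font path in a filter string with its
// Windows equivalent before the string is sent to the Windows machine.
function mapFontsForWindows(filter, fontMap) {
  let out = filter;
  for (const [linuxPath, winPath] of Object.entries(fontMap)) {
    out = out.split(linuxPath).join(winPath);
  }
  return out;
}
```

Generating the escaped value with a helper, instead of typing the backslash by hand, avoids the easy mistake of writing '\:' in a JavaScript string literal, where the backslash is silently dropped.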

This is a private architecture document for Create Studio. Built by Jens Loeffler. Last updated March 2026.
